Position bias in features

What happens when model features depend on prior ranking

I’ve written before about ranking bias as it affects machine learning algorithms, which has a deep research literature behind it. Yet, similar problems can also affect the inputs to these models. This has a much lighter literature. My own take on it is in a new paper titled Position bias in features, in which I frame the issue my own way, bring together some observations from the sporadic literature with some of my own, and demonstrate a simple simulation. I’ll give a light overview here and share some of the fun findings.

Refresher on position bias

When building models in any setting that involves a ranking, the prior ranking of all of the items matters.1 Whether the product is Google Search, Amazon, Netflix, or almost anything else where items are ordered intentionally, there’s usually some element of predicting click or conversion where our labels have been affected by that very ordering that we subsequently need to optimize.2 Items that have historically been shown high up on lists will have received a lot of attention from users, and items buried deep down might never be seen at all.

For ML algorithms such as neural networks, GBDTs, or SVMs, there is a large literature on how to adapt the algorithms to perform accurately and without bias in ranking settings. Typically this involves defining a structural model of how ranking affects user behavior, where positive outcomes are weighted more heavily if they came from lower (more disadvantaged) parts of the list. Approaches can be split into two general types: those that fit the ranking bias parameters alongside other parameters using the ML algorithm, and those that identify ranking bias externally with randomization experiments. I’m on the record liking randomization approaches (in this post and this paper), which have worked well for me, in contrast to some observational methods that have been hit-and-miss on my data. That said, I’m not fundamentally against the observational approach; I like methods that work, and those sometimes work.
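A common structural model of this kind is the position-based (examination) model. The sketch below uses notation that is assumed here rather than taken from this post: $\theta_k$ is the probability that a user examines position $k$, $r_d$ is the relevance of item $d$, and $c$ is the click indicator.

```latex
\begin{aligned}
P(c = 1 \mid d, k) &= \theta_k \, r_d, \\
\mathbb{E}\!\left[\frac{c}{\theta_k} \,\middle|\, d, k\right] &= \frac{\theta_k \, r_d}{\theta_k} = r_d .
\end{aligned}
```

Under this model, weighting each click by $1/\theta_k$ recovers relevance in expectation, which is why clicks from lower positions count for more.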

Position bias in features

Position bias can make its way into our features when those features reflect prior user behavior. Consider one classic use case of rankings: song recommendations. One direction is to encode all of the aspects that customers care about into features: the genre, the artist, duration, title, various extracted or modeled features like pace and whether the song is upbeat, and so on. As creative as we can be, it might still be hard to represent every causal aspect of songs as features. Another approach is to look at a proxy for all of that information: do other people like this song?

We could count how many times the song has been played or liked in the past and use that as a feature. That type of feature might summarize all of the various causal elements. Usually we divide that count by impressions (or another criterion for visibility), turning it into a percentage that represents how often users select an item when they have the opportunity to do so. These click-through rate (CTR) features are mainstays in ML.3
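As a minimal sketch of such a feature, assuming a log with one `(item_id, clicked)` record per impression (the log format and the function name `ctr_features` are hypothetical):

```python
from collections import defaultdict

def ctr_features(log):
    """Compute clicks / impressions per item.

    log: iterable of (item_id, clicked) pairs, one per impression.
    """
    clicks = defaultdict(int)
    impressions = defaultdict(int)
    for item_id, clicked in log:
        impressions[item_id] += 1
        clicks[item_id] += int(clicked)
    return {item: clicks[item] / impressions[item] for item in impressions}

# Hypothetical impression log for two songs.
log = [
    ("song_a", True),
    ("song_a", False),
    ("song_b", False),
    ("song_a", True),
    ("song_b", True),
]
features = ctr_features(log)
print(features)
```

Note that nothing in this computation knows where on the list each impression happened, which is where the trouble starts.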

CTR features suffer from all the problems of position bias. Items from the top of a list will be chosen more often, even if they aren’t actually the best results. CTR will heavily advantage items that were ranked higher in the past, creating a self-fulfilling cycle where highly ranked items stay at the top and low-ranked items don’t have an opportunity to move up.

This is a problem even if the model algorithm itself adjusts for position bias. In my paper I show that in a reductive setting: I rank with only one feature, such that all models that learn a monotonic relationship with respect to that feature would create the same ranking. No amount of position bias adjustment in the model fixes the fact that its lone feature, which summarizes numerous past interactions, is biased as an estimator of relevance. In effect, the feature is less useful than if it had been constructed differently. The ML algorithm might learn an unbiased relationship between that feature and the label, but that can have limited usefulness if the feature itself is flawed.

Following the class of approaches where we use external estimates of position bias, we can unbias our features much like how we can unbias algorithms with inverse propensity weighting (IPW, or IPS).4 In this method, we weight each positive outcome by how disadvantaged its position was. I call this IPW-CTR.
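One plausible way to write this down, as a sketch rather than the exact definition from the paper: divide each click by the examination probability of the position it came from, then average over impressions. The function name `ipw_ctr`, the log format, and the `theta` values below are all assumptions for illustration.

```python
def ipw_ctr(impressions, theta):
    """Inverse-propensity-weighted CTR for a single item.

    impressions: list of (position, clicked) pairs, one per impression.
    theta: dict mapping position -> assumed examination probability.
    """
    if not impressions:
        return 0.0
    weighted_clicks = sum(clicked / theta[pos] for pos, clicked in impressions)
    return weighted_clicks / len(impressions)

# Hypothetical examination probabilities for three positions.
theta = {1: 1.0, 2: 0.5, 3: 0.25}

# One item shown four times; clicked once at position 3 and once at position 2.
item_log = [(1, 0), (3, 1), (3, 0), (2, 1)]

plain_ctr = sum(clicked for _, clicked in item_log) / len(item_log)
value = ipw_ctr(item_log, theta)
print(plain_ctr, value)
```

Here the click at position 3 counts four times as much as a click at position 1 would, and the resulting feature value exceeds 1 even though plain CTR cannot.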

And that could be the whole story, which would be fairly unsurprising and not novel, but there are some very interesting results once you try this empirically.

Fun findings

1. It turns out that IPW-CTR has very high variance.

Dividing by small numbers (between 0 and 1) can create big numbers. That’s something we’ve surely all encountered by now, and we encounter it again with IPW-CTR. Items deep down in rankings have a very small chance of being considered, so we divide their clicks by that tiny likelihood. Those rare clicks can have a large impact on the feature, implying high variance. This is in sharp contrast to CTR, which, like any percentage, has low variance and can be estimated within tight bounds after accumulating only a moderate sample.

The variance of IPW-CTR can destroy your ranking system. Items with very low ranking but with even a single click (or conversion) earn very high values for the feature. This is the intention for high quality items that have started low on lists, but it can also happen with some regularity for low quality items that were rightfully ranked lower.
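A toy simulation can make this concrete. It is only a sketch under assumed numbers, not the simulation from the paper: one good item shown at the top, many poor items shown rarely at a deep position, and clicks drawn with probability equal to examination probability times relevance.

```python
import random

def simulate_features(seed=0):
    rng = random.Random(seed)

    # Hypothetical setup; every number here is an assumption for illustration.
    theta_top, theta_deep = 1.0, 0.05          # examination probability by position
    good_relevance, poor_relevance = 0.30, 0.05
    good_impressions, poor_impressions = 1000, 40
    n_poor_items = 200

    def observe(relevance, theta, n):
        """Return (CTR, IPW-CTR) for one item shown n times at one position."""
        clicks = sum(rng.random() < theta * relevance for _ in range(n))
        return clicks / n, clicks / theta / n

    good_ctr, good_ipw = observe(good_relevance, theta_top, good_impressions)
    poor = [
        observe(poor_relevance, theta_deep, poor_impressions)
        for _ in range(n_poor_items)
    ]

    # Count poor items whose feature value exceeds the good item's value.
    beats_on_ctr = sum(ctr > good_ctr for ctr, _ in poor)
    beats_on_ipw = sum(ipw > good_ipw for _, ipw in poor)
    return good_ctr, good_ipw, beats_on_ctr, beats_on_ipw

good_ctr, good_ipw, beats_on_ctr, beats_on_ipw = simulate_features()
print(f"good item: CTR={good_ctr:.3f}, IPW-CTR={good_ipw:.3f}")
print(f"poor items ranked above it by CTR: {beats_on_ctr}, by IPW-CTR: {beats_on_ipw}")
```

With these numbers, a single click at the deep position yields an IPW-CTR of 1 / 0.05 / 40 = 0.5, above the good item’s true relevance of 0.3, so any poor item that gets lucky once jumps ahead of it.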

While CTR is bounded between 0 and 1, IPW-CTR has no inherent upper bound. For a known position bias factor of θ, the upper bound is 1/θ, and the range and variance will be much larger than for CTR (because θ ≤ 1). For far more detail along those lines, Schnabel et al. (2016) define probabilistic bounds on the variance [1].
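To see where the bound and the extra variance come from, here is a short derivation under simplifying assumptions that are mine rather than the paper’s: a single item with relevance $r$ is shown $N$ times, always at one position with known examination probability $\theta$, and clicks are independent with $c_i \sim \mathrm{Bernoulli}(\theta r)$.

```latex
\begin{aligned}
\mathrm{CTR} &= \frac{1}{N}\sum_{i=1}^{N} c_i \in [0, 1],
&\qquad
\mathrm{IPW\text{-}CTR} &= \frac{1}{N}\sum_{i=1}^{N} \frac{c_i}{\theta} \in \left[0, \tfrac{1}{\theta}\right], \\
\mathbb{E}[\mathrm{CTR}] &= \theta r,
&\qquad
\mathbb{E}[\mathrm{IPW\text{-}CTR}] &= r, \\
\operatorname{Var}[\mathrm{CTR}] &= \frac{\theta r (1 - \theta r)}{N},
&\qquad
\operatorname{Var}[\mathrm{IPW\text{-}CTR}] &= \frac{r (1 - \theta r)}{N \theta}
= \frac{\operatorname{Var}[\mathrm{CTR}]}{\theta^{2}}.
\end{aligned}
```

In this simplified case the unbiased feature pays for its unbiasedness with a variance inflated by $1/\theta^2$. With $\theta = 0.05$, for example, that is 400 times the variance of CTR at the same sample size.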

High IPW-CTR for an item means that such a low-ranked item may subsequently be placed higher up in rankings.5 A low quality item now shown prominently will be quickly corrected and moved down, although maybe not that quickly, depending on the update frequency of the features. Regardless, that item was still shown prominently for some time, and the problem can quickly recur for other low-ranked items. This will be a permanent problem if you have enough total volume and always have some influx of new documents.

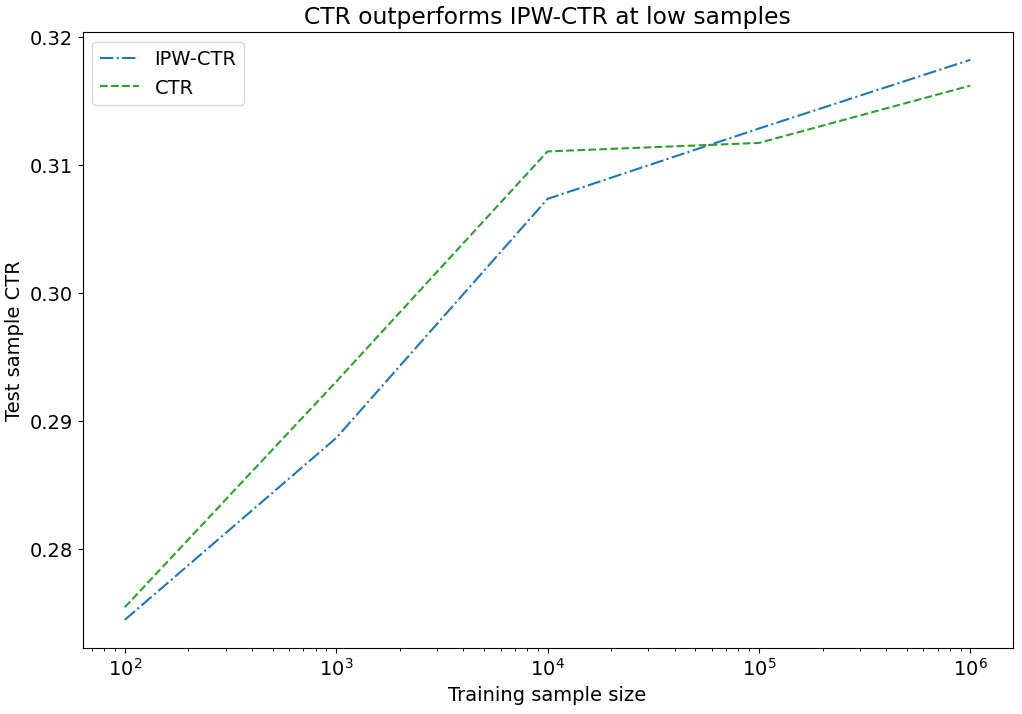

This high variance is not only a theoretical problem. In my paper I demonstrate it through simulations in a realistic setting where impressions per item vary widely. With a small number of searches, the unadjusted and biased CTR outperforms the adjusted and unbiased IPW-CTR, as measured by performance in future searches after updating the feature based on initial searches. In most of my simulations, CTR remains competitive with IPW-CTR at larger sample sizes too.

2. There exists a spectrum of choices on a bias-variance curve

CTR is biased but low variance. IPW-CTR is unbiased but high variance. We can design features anywhere in between, with any chosen bias-variance trade-off.6 This is shown nicely in Swaminathan and Joachims (2015) [2].

And while we can literally trade off bias and variance through Swaminathan and Joachims’s feature with its hyperparameters, we can also choose from a variety of other CTR-inspired features that make their own choices in trading off bias and variance. The features I discuss in my paper (IPW-CTR, COEC, and SNIPS) are partially understandable by their different choices on how much bias to trade for variance.
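To make the idea of neighboring features concrete, here is a hedged sketch of four historical-rate features computed from the same log. The formulas are common textbook forms, not necessarily the exact definitions in the paper: COEC is written as clicks over expected clicks, and the clipped variant caps each inverse-propensity weight at a hypothetical hyperparameter `clip`, which is one widely used way to trade bias for variance. The function name, log format, and `theta` values are assumptions.

```python
def rate_features(impressions, theta, clip=4.0):
    """Compute several CTR-style features for a single item.

    impressions: list of (position, clicked) pairs, one per impression.
    theta: dict mapping position -> assumed examination probability.
    clip: cap on the inverse-propensity weight (hypothetical hyperparameter).
    """
    n = len(impressions)
    clicks = sum(clicked for _, clicked in impressions)
    expected_exams = sum(theta[pos] for pos, _ in impressions)

    ctr = clicks / n
    ipw = sum(clicked / theta[pos] for pos, clicked in impressions) / n
    # Capping the weight bounds the feature at `clip`, at the cost of some bias.
    clipped_ipw = sum(
        clicked * min(1 / theta[pos], clip) for pos, clicked in impressions
    ) / n
    # Clicks over expected clicks: normalize by total examination probability.
    coec = clicks / expected_exams

    return {"ctr": ctr, "ipw_ctr": ipw, "clipped_ipw_ctr": clipped_ipw, "coec": coec}

# Hypothetical examination probabilities and a four-impression log.
theta = {1: 1.0, 2: 0.5, 4: 0.125}
item_log = [(1, 1), (2, 0), (4, 1), (4, 0)]
result = rate_features(item_log, theta)
print(result)
```

Setting `clip` very high recovers IPW-CTR, and setting it to 1 recovers plain CTR, so the one hyperparameter sweeps between the two ends of the curve.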

3. The optimal trade-off might have little dependence on how much position bias exists in the setting

In my simulations I drastically changed the amount of position bias in user behavior, yet often it had only moderate impact on the relative performance of biased and unbiased metrics.

With high amounts of position bias, unbiased metrics have high variance and might perform poorly in ranking. With lower amounts of position bias, the position bias adjustments of those unbiased metrics are less important because there is less position bias to account for. Either way, it is possible for a more biased feature to outperform a less biased feature. It might be unintuitive that high amounts of position bias do not necessarily mean that we should choose a less biased feature, but it makes sense once you internalize that high position bias comes with high variance.

What mattered much more was sample size for all items. Larger samples usually helped IPW-CTR more than CTR, which is what we would expect given their variances.

This pattern could be a saving grace for people who aren’t thinking about position bias: they may have unintentionally chosen a point on the bias-variance trade-off that works for their small sample sizes for at least some items.

4. The choice of weights is crucial

Thus far we have set aside the problem of how to actually measure position bias weights. My earlier post and other paper have some discussion of that topic. Yet the weights we use really, really matter. In my simulations, nothing else mattered as much: not the sample size, not the amount of true position bias, not the feature formula itself.

It’s very easy to get the weights wrong. An approach that might be our first instinct is to use observed click propensities by position. That’s exactly what I tested, and it went poorly. Click propensity will almost certainly be a drastic overestimate of position bias. The observed pattern of clicks by position (most clicks happen at the top of the search results, in a relationship that decays rapidly) is caused by more than just position bias. It’s also caused by quality. The existing ranker, unless it’s a random one, probably does at least a serviceable job of ranking good items ahead of bad ones. We click on the top items more often not only because of our own attention span or trust in the rankings, but also because those items usually are the best ones.
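The confounding is easy to reproduce in a toy setting. The sketch below is not the paper’s simulation; it assumes five positions with hypothetical true examination probabilities, item relevances drawn uniformly at random, and a ranker that happens to order items perfectly. It then estimates position bias the naive way, from click rate by position normalized to position 1.

```python
import random

def empirical_vs_true(n_queries=20000, seed=0):
    rng = random.Random(seed)
    true_theta = [1.0, 0.8, 0.6, 0.5, 0.4]  # hypothetical examination probabilities
    clicks = [0] * len(true_theta)

    for _ in range(n_queries):
        # Each query has five candidates with random relevance; the ranker
        # (assumed perfect here) shows them best first.
        relevances = sorted((rng.random() for _ in true_theta), reverse=True)
        for k, (theta_k, rel) in enumerate(zip(true_theta, relevances)):
            if rng.random() < theta_k * rel:
                clicks[k] += 1

    click_rate = [c / n_queries for c in clicks]
    # Naive weights: observed click rate by position, relative to position 1.
    empirical_theta = [rate / click_rate[0] for rate in click_rate]
    return true_theta, empirical_theta

true_theta, empirical_theta = empirical_vs_true()
for k, (t, e) in enumerate(zip(true_theta, empirical_theta), start=1):
    print(f"position {k}: true theta={t:.2f}, empirical estimate={e:.2f}")
```

Under these assumptions the empirical curve decays much faster than the true one, because lower positions also hold worse items. In expectation the estimate at position 5 is about 0.08 against a true value of 0.4, so inverse weights built from it would be roughly five times too large.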

These observed (“empirical”) weights performed far worse in every comparison I tried, against CTR, IPW-CTR, and other features such as COEC and SNIPS.7 It is very much worth it to improve your estimation of position bias.

Some final thoughts

Position bias in features is an underappreciated phenomenon. It can have a large impact on ranking systems. Ranking is a highly dynamic problem, where we have features that update in real time or on a fixed cadence and models that also update regularly. Features and outcomes are endogenous to the system, affected by prior ranking, so we have causal implications across time. Given such complex settings, we should be modest about preferring any one specific feature or extrapolating from any setting to another.

While the ranking literature is focused on defining ML algorithms, where we ultimately have to pick a ranker, when it comes to features we aren’t limited to just one. To the question of whether we should pick CTR, IPW-CTR, COEC, SNIPS, or something else as our historical rate feature, the answer might be “Yes”.

[1] Schnabel, T., Swaminathan, A., Singh, A., Chandak, N., & Joachims, T. (2016). Recommendations as Treatments: Debiasing Learning and Evaluation. International Conference on Machine Learning.

[2] Swaminathan, A., & Joachims, T. (2015). The Self-Normalized Estimator for Counterfactual Learning. Neural Information Processing Systems.

1. In the recommendations literature these are usually called “items”, while in the position bias literature they are usually called “documents” regardless of how much they resemble traditional written documents. Nowadays, in the traditional ranking setting that is search engines, we’re more likely to be searching through SEO-optimized ad-infested listicles than anything resembling a traditional document.

2. “Click” is a catch-all term in the literature for the positive outcome of choice, which could be a literal click, a conversion, or an appropriate positive action in other types of products.

3. A claim that I feel confident in, yet for which it is surprisingly hard to find evidence to evaluate quantitatively. If anyone knows of a good paper on this topic, please send it my way.

4. The S is for “scoring”. I usually use IPS as the acronym, since that is the more popular term, but I find IPW is more specific and fits better when written out.

5. This depends on how important the feature is, but the goal of engineering these features is to create ones that will be useful and influential in ranking. The actual effect may be less dramatic than shown in my paper due to the presence of multiple other features.

6. Note that this is not the same bias-variance trade-off as the one commonly discussed for model complexity. In our setting we’re talking about variance of a more literal feature vector, compared to how the variance in a large parameter model requires one to think about extrapolating across multiple distributions.

7. I didn’t show SNIPS results in the paper, for brevity and because its performance was similar to other features. Its performance seemed to depend more on its degree of bias-variance trade-off than on improving or worsening that frontier.