Profit-maximizing experimentation regimes

Explicitly modeling our sample allocation and decision rules to optimize some metric of choice, rather than using arbitrary hypothesis testing rules.

Last time I wrote about making decisions with randomized experiments. Specifically, I critiqued policies of blindly applying a p < 0.05 criterion. I suggested that the 0.05 threshold is often too stringent, and that a maniacal adherence to minimizing experiment false positives is unproductive. That post covered a lot of ground, with many claims that were illustrative without being exhaustive (or, for that matter, exhausting). At the end I promised follow-up posts on how to structure the problem. So here we go.

We’ll review one specific paper: Test & Roll: Profit-Maximizing A/B Tests, by Elea McDonnell Feit and Ron Berman [1].¹ It has the clarity and practicality that I appreciate in a paper.

Let’s define an experimentation regime as a set of standards and behaviors for how we run experiments. We run many experiments, and our procedures should follow from a coherent set of beliefs. We choose our methods, such as sample size calculations followed by traditional null hypothesis A/B testing, and we choose our criteria for when to consider an experimental variation a success and deploy it as the standard method (“shipping” it).

The paper articulates one such coherent experimentation regime, but a very different one from traditional hypothesis testing. Importantly, it starts with an explicit goal to maximize profit. It’s not the only way to design an experimentation regime. Evaluating potential changes with 0-difference hypothesis tests, with a p-value threshold for shipping, is another procedure, but one that is not necessarily profit maximizing. It would be an incredible coincidence if it were.

Their context is marketing, but the approach generalizes to other settings. So if “website design, display advertising and catalog tests” doesn’t resonate with you, imagine another relevant scenario. You could be testing recommendation engines to maximize clicks or conversions, in which case those clicks or conversions would be your metric of profit. Another framing for these types of problems is minimizing regret, which is equivalent to maximizing profit.²

Once you articulate that the experimentation program should maximize some cumulative metric, other results follow quickly. For starters, at the end of an experiment you should ship the treatment with the higher expected value of the metric. If you go into the experiment with no prior information or expectation on either A or B treatments, that means shipping whichever one has the best metric during the experiment. The p-value is irrelevant. Or if you want to translate that decision criterion into p-value terms, it’s that you would ship with a one-sided p-value < 0.5. Not 0.05, but 0.5.
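To make the p < 0.5 translation concrete, here is a minimal sketch. It assumes a one-sided z-test with a known, shared standard deviation `s`; the function names and the summary statistics are made up for illustration and do not come from the paper.

```python
import math


def one_sided_p_value(mean_a, mean_b, s, n_a, n_b):
    """One-sided p-value for H0 "B is no better than A" (z-test, known s)."""
    standard_error = s * math.sqrt(1.0 / n_a + 1.0 / n_b)
    z = (mean_b - mean_a) / standard_error
    # Upper-tail probability of a standard normal, via the error function.
    return 0.5 * (1.0 - math.erf(z / math.sqrt(2.0)))


def ship_b_by_mean(mean_a, mean_b):
    """The no-prior rule from the text: ship B if it did better in the test."""
    return mean_b > mean_a


# Hypothetical observed means for treatments A and B.
examples = [(0.100, 0.104), (0.100, 0.097), (0.100, 0.130)]
for mean_a, mean_b in examples:
    p = one_sided_p_value(mean_a, mean_b, s=0.3, n_a=500, n_b=500)
    print(mean_a, mean_b, round(p, 3), ship_b_by_mean(mean_a, mean_b), p < 0.5)
```

The last two columns always agree: B has the higher observed mean exactly when the one-sided p-value falls below 0.5.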

There are some caveats to that. You might want a Bayesian prior in favour of an incumbent treatment. That fits within the framework, and we’ll get to it. For now let’s consider basic scenarios, like the marketing programs in the paper. You might be trying two versions of a digital ad, neither of which you have tried before. You might not have any particular hypothesis in favour of either version. There is no cost difference between the two.³ Whichever one attracts the most users is your best guess at which one is better. You might not have statistical evidence to be highly confident that it is better than the other one, but it’s more likely to be better than to be worse. So that’s the one you should use in the future, and the profit-maximizing choice doesn’t need a p-value.

Another consequence that follows quickly is that experiment duration and sample allocation should be chosen to maximize profit. Because everything should be chosen to maximize profit (or whatever our metric of choice is). We should care about how much sample is used in the test and how much the experimental treatments might vary in outcomes.

The traditional approach to power calculations doesn’t do this. As the paper says, “the objective in hypothesis testing is to maximize statistical power while controlling type-I error, while we focus on maximizing profits”. Sample size calculators can even recommend more sample than exists; if we followed them literally then it wouldn’t matter which treatment wins because we would never reach a point of choosing between the treatments. I believe a lot of practitioners recognize this limitation and hack around it, by working backwards from available sample size to find some combination of alpha, beta, and detectable effect size that suits them. This working backwards isn’t done with a quantified calculation, so it has all the problems of any hand-tuned optimization.

The model

There are N total customers. We will pick n1 customers to show the A treatment to and n2 to show the B treatment to. This is the “test” stage. At the end of the test stage, we evaluate the data from the tested customers, making a decision on which treatment is best. We ship the winning treatment, and the last N - n1 - n2 customers receive that treatment.

Profit in the test phase is:

Profit in the roll phase is:
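As a sketch in my own notation, which may differ from the paper’s: let y_{k,i} be the outcome of the i-th customer who receives treatment k within a given phase, and let δ equal 1 if treatment 1 is shipped after the test and 0 otherwise. Under those assumptions, the two profits can be written as:

```latex
\begin{align}
\Pi_{\text{test}} &= \sum_{i=1}^{n_1} y_{1,i} + \sum_{i=1}^{n_2} y_{2,i} \\
\Pi_{\text{roll}} &= \sum_{i=1}^{N - n_1 - n_2} \bigl[\, \delta \, y_{1,i} + (1 - \delta) \, y_{2,i} \,\bigr]
\end{align}
```

The test-phase profit depends only on how many customers see each treatment, while the roll-phase profit also depends on the decision δ, which is where the quality of the test matters.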

We want an experimentation program that maximizes the sum of those equations. The choice parameters we focus on are n1 and n2, how many customers to expose to each treatment during the test. “Designing the test entails selecting the sample sizes n1 and n2 that maximize the total expected profit”. High n1 and n2 can be costly, because one treatment will be worse and we would like to minimize how many customers we treat with it during the test stage. However, high n1 and n2 also add value, because that increases the likelihood we make the correct decision and treat the remaining customers with the better treatment in the roll stage. “Thus, the test & roll framework sets up an explicit trade-off between learning during the test phase and earning during the roll phase”, which is a classic explore-exploit problem.

In Section 3 the paper goes into detail on one descriptive yet relevant case: symmetric normal priors. The treatments will have mean outcomes m1 and m2, respectively, with variance over customers of s² (for both). m1 and m2 will differ, but they’re drawn from the same normal distribution with mean μ (mu) and variance σ² (sigma squared). In English: We don’t know in advance which treatment is better, we know the spread of customer outcomes, we know the typical level of our metric, and we know how much that metric varies across different treatments in general even if we don’t yet know what value these specific treatments have.

Expected profit in the test stage is (n1 + n2)μ, meaning the expected average profit per customer is μ. The decision criterion if the treatments are equally sized is just δ(y1, y2) = I(y1 > y2), where y1 and y2 are the average outcomes for each treatment and I is the indicator function.

Expected average profit in the roll stage exceeds μ because we have a better than even likelihood of picking the best variant.
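To see how much it exceeds μ, here is a sketch of the calculation under the symmetric normal setup, assuming equal test sizes n1 = n2 = n and the ship-the-higher-average rule. This is my own derivation from those assumptions, not a formula quoted from the paper; \bar{y}_1 and \bar{y}_2 denote the test-stage averages.

```latex
\begin{align}
\bar{y}_1 - \bar{y}_2 &\sim \mathcal{N}\!\left(0,\; 2\sigma^2 + \tfrac{2 s^2}{n}\right),
\qquad
\operatorname{Cov}\!\left(m_1 - m_2,\; \bar{y}_1 - \bar{y}_2\right) = 2\sigma^2 \\
\mathbb{E}\!\left[m_{\text{shipped}}\right]
  &= \mu + \tfrac{1}{2}\,\mathbb{E}\!\left[(m_1 - m_2)\,\operatorname{sign}(\bar{y}_1 - \bar{y}_2)\right] \\
  &= \mu + \frac{\sigma^2}{\sqrt{\pi\left(\sigma^2 + s^2 / n\right)}} \;>\; \mu
\end{align}
```

The bonus over μ grows with n and approaches σ/√π as n becomes large, which is the expected advantage of always picking the better of two treatments drawn from the same normal prior.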

Optimal n1 and n2 are shown by the authors and have interesting properties:

- n1 = n2 (in this setting with neutral priors)

- n1 + n2 < N (always)

- n1 and n2 grow with s, because higher s means we have less certainty for any given test sample

- n1 and n2 decrease with σ. σ² is the variance in outcomes from treatments in general. If σ is large, we have a larger likelihood of seeing dramatically different results in the test stage, so we are more likely to have certainty about which treatment is better. Furthermore, that dramatic difference means that exploitation pays off more (or, equivalently, that testing is costly) because one treatment will be worse than the other.
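These properties can be checked numerically. The sketch below assumes the symmetric normal setup with n1 = n2 = n, and uses the expected gain over Nμ of (N − 2n)·σ²/√(π(σ² + s²/n)). The closed form in `optimal_test_size` is what I get by maximizing that expression, so treat it as a derivation under these assumptions rather than a quotation of the paper. The function names and parameter values are made up for illustration.

```python
import math


def expected_gain(n, N, s, sigma):
    """Expected total profit above N * mu when each arm gets n test customers."""
    per_roll_customer = sigma**2 / math.sqrt(math.pi * (sigma**2 + s**2 / n))
    return (N - 2 * n) * per_roll_customer


def optimal_test_size(N, s, sigma):
    """Real-valued n that maximizes expected_gain (root of 4n^2 + 6rn - rN = 0)."""
    r = (s / sigma) ** 2
    return math.sqrt(N * r / 4 + (0.75 * r) ** 2) - 0.75 * r


def grid_search_test_size(N, s, sigma):
    """Brute-force integer maximizer, used only to sanity-check the closed form."""
    return max(range(1, N // 2), key=lambda n: expected_gain(n, N, s, sigma))


# Hypothetical values: 100,000 customers, noisy outcomes, small treatment spread.
N, s, sigma = 100_000, 0.3, 0.02
n_star = optimal_test_size(N, s, sigma)
print("closed form:", round(n_star), "grid search:", grid_search_test_size(N, s, sigma))
print("fraction of customers tested:", round(2 * n_star / N, 3))
print("noisier outcomes (s doubled):", round(optimal_test_size(N, 2 * s, sigma)))
print("more varied treatments (sigma doubled):", round(optimal_test_size(N, s, 2 * sigma)))
```

Doubling s raises the recommended test size and doubling σ lowers it, matching the list above, and the test stage uses only a small fraction of N.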

The authors derive expected regret relative to an omniscient practitioner who knows the better treatment ahead of time with no uncertainty, shown in the paper’s equation 14. This regret is O(√N), meaning that it grows no faster than proportionally to the square root of the available sample. This is dramatically better than regret for the null hypothesis regime, which is Ω(N), meaning it is asymptotically bounded below by a constant multiple of N. It makes sense that a rule designed to maximize profit will create much more profit than a rule tuned for a specific trade-off between experiment false positives and false negatives.
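A quick numerical sketch of the √N scaling, under the same symmetric normal assumptions with n1 = n2 = n. The omniscient benchmark here earns μ + σ/√π per customer (the expected maximum of two draws from the prior), and the test size comes from my own maximization of expected profit; neither expression is copied from the paper, and the parameter values are invented.

```python
import math


def optimal_test_size(N, s, sigma):
    """Per-arm test size that maximizes expected profit in the symmetric case."""
    r = (s / sigma) ** 2
    return math.sqrt(N * r / 4 + (0.75 * r) ** 2) - 0.75 * r


def expected_regret(N, s, sigma):
    """Expected profit lost relative to always using the better treatment."""
    n = optimal_test_size(N, s, sigma)
    omniscient_gain = N * sigma / math.sqrt(math.pi)
    test_and_roll_gain = (N - 2 * n) * sigma**2 / math.sqrt(
        math.pi * (sigma**2 + s**2 / n)
    )
    return omniscient_gain - test_and_roll_gain


s, sigma = 0.3, 0.02  # hypothetical values
for N in (10**4, 10**6, 10**8):
    regret = expected_regret(N, s, sigma)
    print(N, round(regret, 1), round(regret / math.sqrt(N), 4))
```

If regret is of order √N, the last column should level off as N grows instead of growing with N. In this setup it appears to approach 2s/√π.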

Extension to asymmetric priors

Naturally you might want to favour one treatment, perhaps an incumbent. It might have survived previous experiments. You want to give it an advantage, and you may also have previous data on how it performed. This still fits in the general profit-maximization framework and the specific model presented. Section 4 of the paper goes into detail. These priors will affect optimal sample size, and they are also used in the decision criterion. That criterion is still to pick the treatment with the best expected outcome, but now that expected outcome is informed by priors.
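As a minimal sketch of a prior-informed decision, the code below uses the standard normal-normal conjugate update with a known outcome standard deviation `s`, then ships whichever treatment has the higher posterior mean. The function names and all numbers are hypothetical, and this illustrates the idea rather than reproducing the paper’s Section 4.

```python
def posterior_mean(prior_mean, prior_sd, sample_mean, n, s):
    """Posterior mean of a treatment's true mean (normal prior, known s)."""
    prior_precision = 1.0 / prior_sd**2
    data_precision = n / s**2
    weighted_sum = prior_precision * prior_mean + data_precision * sample_mean
    return weighted_sum / (prior_precision + data_precision)


def ship_challenger(incumbent_post, challenger_post):
    """Ship the challenger only if its posterior mean is higher."""
    return challenger_post > incumbent_post


s, n = 0.3, 1000  # hypothetical outcome spread and per-arm test size

# Incumbent: tight prior from earlier experiments. Challenger: vague, slightly lower.
incumbent = posterior_mean(prior_mean=0.100, prior_sd=0.005, sample_mean=0.100, n=n, s=s)
challenger = posterior_mean(prior_mean=0.095, prior_sd=0.020, sample_mean=0.101, n=n, s=s)

print(round(incumbent, 4), round(challenger, 4), ship_challenger(incumbent, challenger))
```

Here the challenger wins the raw comparison (0.101 versus 0.100), but its posterior mean is still pulled below the incumbent’s, so the incumbent stays. A larger observed lift would overcome the prior.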

The authors wrote that:

> The typical null hypothesis test in (1) provides no guidance on which treatment to deploy when the results are not significant. Many A/B testers advocate deploying the incumbent treatment (if there is one) in the interest of being “conservative”

Conservatism can make sense. We do have prior information. We also might have costs in effort, complexity, or tech debt. Another hard-to-measure cost could be the spillover effects on customers of continually changing the product. But we can get conservatism in a structured manner, through an optimization equation, rather than tuning null hypothesis parameters through trial and error over many experiments.

Comparison with multi-armed bandits

Throughout the paper, multi-armed bandits (MABs) are the elephant in the room. In a MAB, throughout the experiment we adjust the proportions of customers for each treatment. Specifically, with the Thompson sampling mechanism for MABs we assign to each treatment in proportion to the likelihood that it’s the best treatment. If we have no priors, then both treatments start with equal traffic, a 50/50 split.⁴ As one starts to perform better than the other, we assign it more traffic. MABs smoothly blend exploration and exploitation.
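For intuition, here is a minimal Thompson sampling sketch for two treatments with binary outcomes (say, click or no click). It assumes uniform Beta(1, 1) priors and invented conversion rates; it is a generic textbook version, not the bandit implementation evaluated in the paper.

```python
import random


def thompson_sampling(true_rates, rounds, seed=0):
    """Simulate Beta-Bernoulli Thompson sampling; return customers per arm."""
    rng = random.Random(seed)
    arms = range(len(true_rates))
    successes = [0] * len(true_rates)
    failures = [0] * len(true_rates)
    pulls = [0] * len(true_rates)
    for _ in range(rounds):
        # Draw one plausible conversion rate per arm from its posterior.
        draws = [rng.betavariate(1 + successes[k], 1 + failures[k]) for k in arms]
        # Serving the arm with the highest draw assigns traffic in proportion
        # to each arm's posterior probability of being the best.
        arm = max(arms, key=lambda k: draws[k])
        pulls[arm] += 1
        if rng.random() < true_rates[arm]:
            successes[arm] += 1
        else:
            failures[arm] += 1
    return pulls


# Hypothetical true conversion rates of 5% and 15%.
pulls = thompson_sampling(true_rates=[0.05, 0.15], rounds=5000)
print(pulls)
```

Traffic starts out roughly even and drifts toward the better arm as evidence accumulates, with no explicit switch from a test stage to a roll stage.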

The Test & Roll authors compare their method with MABs several times. Both Test & Roll and MABs take a similar structured approach to experiment design, maximizing profit and (equivalently) minimizing regret. “Effectively, the problem we define can be seen as a constrained version of a multi-armed bandit, where there are only two allocation decisions instead of many”. Those two allocation points are at the start of the experiment, at which time we pick n1 and n2, and when transitioning stages from test to roll.

Having only two decision points, instead of continual optimization, implies that Test & Roll will be “sub-optimal relative to a multi-armed bandit”. However, those limited decision points are also a feature, as they result in “a transparent decision point and reduced operational complexity”. You define allocations once and have a known end to experimentation, and then you can move on from experimentation with a single winning treatment. Bandits also “can be difficult to execute when the response is not immediately observable (e.g., sales) or when the treatments are sent out in batches”.

Regret in Test & Roll is O(√N), which is also the order of regret for multi-armed bandits with Thompson sampling. Test & Roll has higher regret, but it scales similarly with N.

Whether we should pick the operational simplicity of Test & Roll or the efficiency of a multi-armed bandit depends on the circumstances. Nor are these the only reasonable experiment regimes to choose from.

Experimental results

The authors evaluate their method in three applied settings. Let’s discuss the first. From one of their earlier papers they have a dataset of 2,101 real-world experiments run on the Optimizely platform. They use this corpus to pick parameters for the case with balanced normal priors.

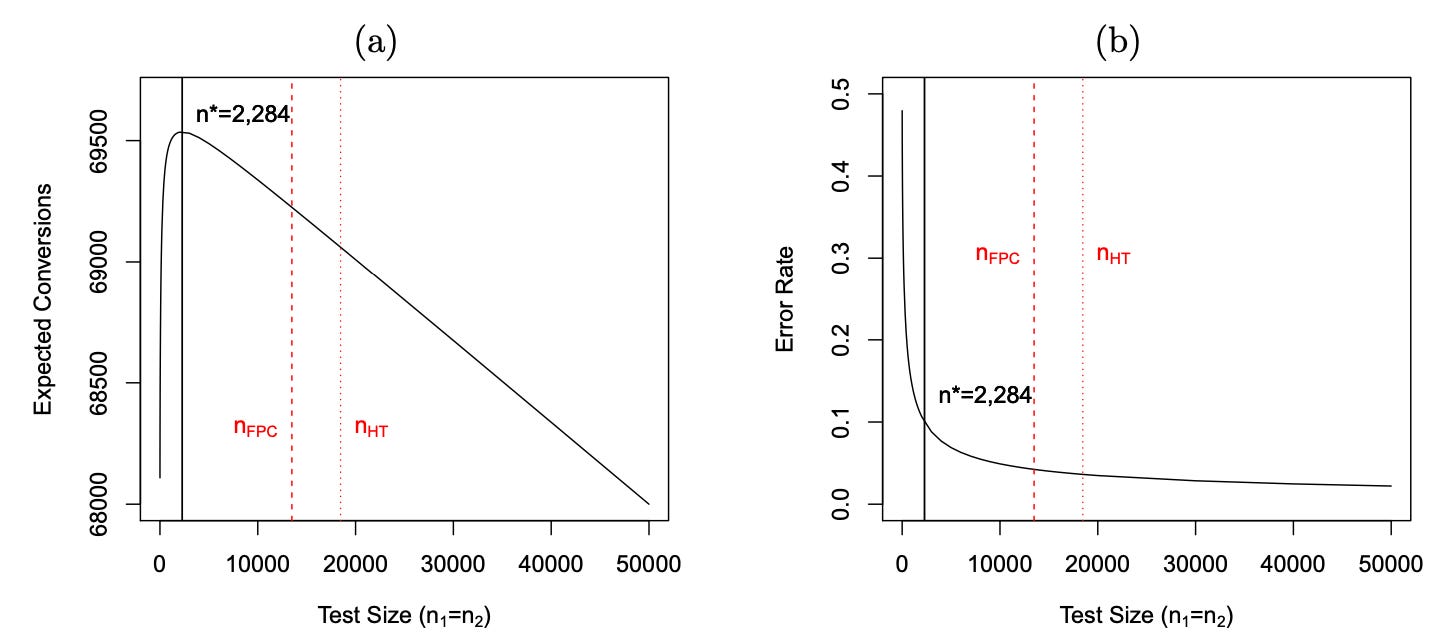

Figure 3 compares Test & Roll allocation versus traditional hypothesis testing-driven experiment regimes. They show the traditional method both with and without adjustments for population size.

Test & Roll allocates far less sample to the test, with much higher expected profit, as shown in 3a. 3b shows that this (necessarily) leads to less certainty about the winning treatment and a higher error rate. In the paper’s context of a fixed N, that higher error rate is worth it (as shown directly in 3a). The goal isn’t to always be right, but rather to be as profitable as possible. By contrast, the way to maximize knowledge is to never stop the experiment, accumulating fairly even sample in both treatments and never committing to a winner, which would be a disastrously unprofitable way to run a business.

Table 1 contrasts the performance of those three methods, alongside three others: random (test-free) treatment, the omniscient case of knowing the better treatment, and a MAB. The MAB has very little expected regret, much lower than Test & Roll, but Test & Roll handily beats the two hypothesis-test driven approaches.

The authors show similar results (in terms of rank ordering) for the other two applications. This first application was the least flattering for Test & Roll both relative to MAB and to hypothesis testing.

Limitations

Test & Roll isn’t the only reasonable experimentation regime. It might not even be the best one covered in its own paper, given the authors’ discussion and empirical comparison with multi-armed bandits.

There are some limitations. Having two decision points naturally results in less optimization than a multi-armed bandit. Knowledge and assumptions of parameters are another challenge, especially if you want to incorporate informed priors. The context of a known and fixed N is yet another; we might reasonably want longer experiments if N has the potential to grow to an unknown size. And all of these methods assume stationarity in underlying user behavior.

The world is complex and hard to distill into a simple model. But Test & Roll has real advantages, especially compared with fairly arbitrary regimes of hypothesis testing combined with judgment. The paper is a nice read. It’s clear, it presents a coherent framework, and the authors demonstrate that framework with useful experiments. Most of all, I like how the experiment regime was clearly inspired by a practical setting. That’s the Simplicity is SOTA way.

If you liked this post, you might like its predecessor, p < 0.05 considered harmful. You might also like a tangentially-related trilogy I wrote earlier: Measuring the impact of marketing, When RCTs aren’t enough for marketing measurement, and Human dynamics of marketing measurement.

[1] Feit, E.M., & Berman, R. (2018). Test & Roll: Profit-Maximizing A/B Tests. Marketing Science.

¹ Every quote in this post comes from that paper.

² As long as you coherently define regret and profit. You could define regret as the missed profit relative to omniscience, or profit as negative regret.

³ You can also measure costs and include them directly in your profit metric, a point made in the paper.

⁴ MABs are particularly popular when there are many treatments. But, since the Test & Roll paper discusses the simplest case of only two treatments, let’s stick with that for comparison.