When RCTs aren't enough for marketing measurement

Experimenting on advertising spend is the beginning, not the end, of understanding marketing effectiveness

In my last post I gave some background on marketing measurement. I made the case for two claims: that the causal value of marketing programs differs substantially from top-line advertising revenue, and that it is very hard to measure causal value without randomized experiments. In this post I want to explain some challenges that persist even with randomized trials.

But as a quick aside, in the time since I wrote the last post I found someone else who covered the same papers and probably did it better! If you’re craving more on this topic, please take a look at this great write-up by Scott Cunningham.

Using incrementality

When we attribute revenue from users to the advertising channel that we used to acquire them, we disregard the possibility that some users would have arrived and spent on our service even if they hadn’t been targeted by ads. Incrementality is the ratio of causal revenue to total attributed revenue.

We might target a profit-maximizing amount of marketing, or a per-user profitability estimate, or we might maximize user growth subject to some budget constraint. Without incrementality measurement, we overestimate the profitability of our marketing programs. Whatever our goal is, we’ll overestimate the return to the program and spend more than we would with accurate incrementality measurement.

Take an advertiser that tries to always target visitors who are profitable at the margin. Revenue from each user, minus the cost of the ad that brought in the user, is a non-incremental calculation of net profit. If we could make our bidding decisions independently for each user, we would be willing to pay any amount below the value returned by that user. If we aim to target any users who would be profitable to target, yet we overestimate the revenue side of their ledger, then we will acquire some users at a loss. We might be blissfully unaware that those customers lose us money, but that doesn’t make it okay.

If we know the incremental revenue per user who clicks on an ad, then we can more accurately calculate the amount worth paying to acquire them. If we expect a new user to bring $20 of revenue (assuming no marginal costs to having them as a user), but they only have 50% incrementality, then it is only worth spending up to $10 on an ad to reach that user. Another way of looking at it is that there could be a pool of users who will spend $20, and half of them only come to the website if they see an ad, while the other half would come anyway. It isn’t worth spending $20 on each of them.
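The arithmetic above can be sketched in a few lines of Python. This is a minimal illustration, not a bidding system: the function names and the pool of 1,000 users are hypothetical, and it assumes, as the example does, that there are no marginal costs beyond the ad itself.

```python
def max_profitable_bid(expected_revenue: float, incrementality: float) -> float:
    """Most we can pay per acquired user before the ad loses money.

    incrementality is the share of attributed revenue that is truly
    caused by the ad (0.0 to 1.0).
    """
    if not 0.0 <= incrementality <= 1.0:
        raise ValueError("incrementality must be between 0 and 1")
    return expected_revenue * incrementality


def incremental_profit(cost_per_user: float, pool_size: int = 1000,
                       revenue_per_user: float = 20.0,
                       incrementality: float = 0.5) -> float:
    """Causal profit from paying cost_per_user for every user in the pool.

    Hypothetical pool: everyone spends revenue_per_user, but only the
    incremental share would not have come without the ad.
    """
    causal_revenue = pool_size * incrementality * revenue_per_user
    ad_cost = pool_size * cost_per_user
    return causal_revenue - ad_cost


print(max_profitable_bid(20.0, 0.5))  # 10.0
print(incremental_profit(20.0))       # -10000.0
print(incremental_profit(10.0))       # 0.0
```

Paying the full attributed $20 per user loses $10,000 on this hypothetical pool, even though a non-incremental ledger would show it breaking even.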

With a randomized controlled trial (RCT) we can identify the incrementality of the marketing program. But we still face some obstacles.

Incrementality is a curve, not a point

In the studies I discussed in my last post the experimenters compared spending at two levels: the current campaign parameters, and zero.

These are very different amounts. Odds are that neither is the optimal spending amount. If the ideal spending amount is somewhere in the middle, a single experiment might not be a very helpful guide.

That single experiment might give a good estimate for program incrementality at its current size. Yet that result might not extrapolate to larger or smaller programs. Establishing that a marketing program has 10% incrementality is not the same as knowing that the next dollar spent will have 10% incrementality.

Advertisers may implicitly rely on an assumption of constant incrementality. A more realistic model is diminishing incrementality. Compared to not advertising at all, the first set of ads reaches the most promising audience. When advertising heavily, on the other hand, marginal spending might result in showing ads over and over again to the same people, oversaturating them.

The chart shows a hypothetical relationship between size and incrementality. The blue line shows the incrementality of the next user, and the orange line shows average incrementality for a campaign of that size. With diminishing marginal incrementality, average incrementality is always higher than marginal incrementality.
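To make the gap between the two lines concrete, here is a small sketch. The exponential decay and its parameters are assumptions chosen purely for illustration; nothing here is estimated from real campaigns.

```python
import math

# Hypothetical curve parameters (assumptions, not measurements).
START = 0.6     # incrementality of the very first user acquired
DECAY = 0.001   # how quickly incrementality falls as the campaign grows


def marginal_incrementality(n: int) -> float:
    """Hypothetical incrementality of the n-th acquired user (n starts at 1)."""
    return START * math.exp(-DECAY * (n - 1))


def average_incrementality(size: int) -> float:
    """Average incrementality over all users in a campaign of this size."""
    total = sum(marginal_incrementality(n) for n in range(1, size + 1))
    return total / size


for size in (1, 500, 1000, 2000):
    marginal = marginal_incrementality(size)
    average = average_incrementality(size)
    # The ratio is how much we overvalue the next user if we treat the
    # campaign-wide average as if it applied at the margin.
    print(size, round(marginal, 3), round(average, 3), round(average / marginal, 2))
```

A pause experiment against zero spend measures something like the average column. Under this assumed curve the two columns coincide only for the first user, and the gap widens as the campaign grows.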

See this excellent post by Donald Hui about advertising during his time at Wish, where they advertised so thoroughly across every channel that smaller channels had “little incrementality and tons of difficulty scaling” [1].

The flatness of the curve, or the lack thereof, has important implications for a marketing campaign. A horizontal curve means we have constant incrementality. A steep curve means incrementality drops rapidly. If we use an advertising pause in an RCT to estimate incrementality while assuming that the result can be used for marginal advertising decisions, a true (unobserved) steep curve means that we overvalue marginal ads and likely buy them at a large loss.

Estimating a function is harder than estimating a constant. We cannot run an RCT with infinite variations of spending. We can alleviate this problem slightly by trying a variety of spending amounts, but if our variation is over geographies then there are limits on how finely we can divide regions. If our variation is across time, then we might reach incorrect results if the incrementality curve itself changes over time or varies by season.
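As a rough sketch of what several spending levels buy us, suppose an experiment had arms at a handful of spend levels. The numbers below are invented for illustration, and `segment_returns` and `average_return` are hypothetical helper names. The code measures incremental revenue per extra dollar between adjacent arms, which is a coarse, piecewise view of the curve rather than the curve itself.

```python
# Hypothetical arms: (weekly spend, incremental revenue versus a zero-spend holdout).
# These figures are made up for illustration only.
arms = [(0.0, 0.0), (1000.0, 1800.0), (2000.0, 2900.0), (4000.0, 3900.0)]


def segment_returns(arms):
    """Incremental revenue per extra dollar between adjacent spend levels."""
    ordered = sorted(arms)
    return [
        (lo_spend, hi_spend, (hi_rev - lo_rev) / (hi_spend - lo_spend))
        for (lo_spend, lo_rev), (hi_spend, hi_rev) in zip(ordered, ordered[1:])
    ]


def average_return(arms):
    """Incremental revenue per dollar at the highest spend level, versus zero."""
    top_spend, top_revenue = max(arms)
    return top_revenue / top_spend


for lo_spend, hi_spend, per_dollar in segment_returns(arms):
    print(f"{lo_spend:>6.0f} to {hi_spend:>6.0f}: {per_dollar:.2f} per extra dollar")
print(f"average at top level: {average_return(arms):.3f}")
```

With these invented numbers, a single on/off test at the top spend level would report close to break-even, while the last segment returns only half of each extra dollar. Each additional arm costs sample size, which is where the limits on geographies and time periods start to bind.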

Limited data range

A related but more general challenge is that we might not have much variation in marketing spend.

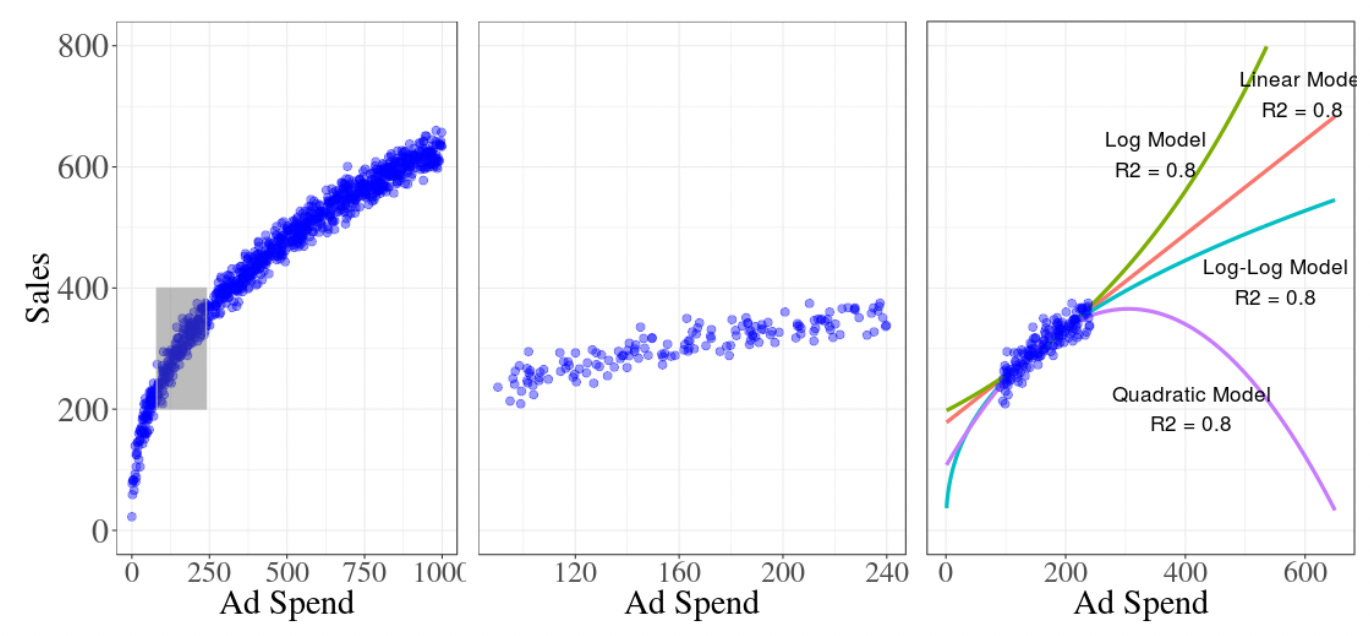

Researchers at Google wrote a good summary of advertising challenges, which included this helpful diagram demonstrating the problem of limited data range [2].

The left panel shows a hypothetical relationship between spend and sales. The middle one shows how limited our view of the curve is if we only observe a narrow range of spend. The third panel shows how a variety of models could fit that narrow range but extrapolate in wildly different ways.
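The same point can be reproduced with a toy example. Everything here is assumed for illustration: the square-root "true" curve, the observed spend range of 90 to 110, and the choice of a linear and a logarithmic model. The data are noise-free so that the result is deterministic.

```python
import math


def fit_line(xs, ys):
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def true_sales(spend):
    """Hypothetical response curve that the analyst never observes directly."""
    return 100.0 * math.sqrt(spend)


# We only ever observe spend in a narrow band.
observed_spend = [90.0 + i for i in range(21)]  # 90, 91, ..., 110
observed_sales = [true_sales(s) for s in observed_spend]

lin_a, lin_b = fit_line(observed_spend, observed_sales)
log_a, log_b = fit_line([math.log(s) for s in observed_spend], observed_sales)


def linear_model(spend):
    return lin_a + lin_b * spend


def log_model(spend):
    return log_a + log_b * math.log(spend)


def max_in_range_error(model):
    """Worst absolute miss of a model over the observed spend range."""
    return max(abs(model(s) - true_sales(s)) for s in observed_spend)


print("in-range error:", max_in_range_error(linear_model), max_in_range_error(log_model))
print("at spend 400:", linear_model(400.0), log_model(400.0), true_sales(400.0))
```

Both models track the observed band closely, yet at four times the observed spend their predictions differ by hundreds of units of sales and bracket the assumed true value from opposite sides. Nothing inside the observed range tells us which one to trust.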

Even within the small data range, what variation exists might be due to seasonality or market size, rather than random variation. This makes it persistently hard to tune marketing programs whose size changes substantially.

Incrementality with multiple channels

The same users might be reached repeatedly through various advertising surfaces (“channels”), including Google Search, Facebook, Bing, YouTube, mobile app ads, streaming TV, good old-fashioned network TV, and more, potentially with multiple mechanisms for advertising for each. See again Donald Hui’s post where he describes using all products and formats available on each large channel, and operating on 10-20 different channels at a time [1].

The channels may be fundamentally different in their user behavior, and we may want to measure incrementality at a channel level. This is complicated by the substantial possibility of reaching some of the same users across multiple channels.

Uber wrote a study on measuring advertising lift, and on this topic of multiple channels they imply that pausing one channel could lead to cannibalization by other channels. They say “the risks of channel cannibalization might bias the experiment” [3]. Yet I see it as depending on what they want to measure. Pausing one channel tells you what would happen if you stopped advertising on that channel but kept all the others. Avinash Kaushik at Google calls this “channel-silo incrementality” [4]. I interpret that as the channel’s incrementality as a marginal channel, holding the other channels fixed. That’s useful for understanding how valuable a channel is, in the presence of other channels. This is very helpful if you want to consider leaving a channel as a whole, perhaps due to fixed costs or the overhead of managing it.

I’m not sure what the alternative is. Imagine pausing all channels and then experimenting with each one individually, without any other active channels. That would tell you the effectiveness of each channel if it were the only one. But I don’t see that as a more valid or less biased form of incrementality. That would be helpful if we had to choose exactly one channel to keep, but that isn’t usually the pertinent choice.

We must choose which hypothetical to consider when evaluating incrementality of one channel: one in which other channels exist or one in which they don’t. Both of these hypotheticals are valid, and their results differ when incrementality is a varying function rather than a constant. Two measurements that interest me are average incrementality of each channel holding the others fixed (“channel-silo incrementality”), and incrementality of the program as a whole (“marketing-portfolio incrementality”). The former can be tested by pausing spend for each channel in its own experiment, and the latter can be tested by pausing spend in all channels. If treatment varies by geography, the latter is a lot harder because it requires coordination across geographies.
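A toy model shows how these hypotheticals give different numbers. It assumes that a user converts organically with some probability and that each active channel independently gets its own chance to convert them. The probabilities and the channel names `A` and `B` are invented, and real channels need not overlap in this simple way.

```python
from math import prod


def conversion_rate(organic: float, active_channels: dict) -> float:
    """Chance a user converts when each active source independently may convert them."""
    return 1.0 - (1.0 - organic) * prod(1.0 - p for p in active_channels.values())


# Hypothetical probabilities, chosen only for illustration.
ORGANIC = 0.10
CHANNELS = {"A": 0.20, "B": 0.30}

everything_on = conversion_rate(ORGANIC, CHANNELS)
everything_off = conversion_rate(ORGANIC, {})

# Marketing-portfolio incrementality: pause every channel at once.
portfolio_lift = everything_on - everything_off


def silo_lift(name: str) -> float:
    """Channel-silo incrementality: pause one channel, keep the others running."""
    others = {k: v for k, v in CHANNELS.items() if k != name}
    return everything_on - conversion_rate(ORGANIC, others)


def solo_lift(name: str) -> float:
    """Lift from running one channel with every other channel paused."""
    return conversion_rate(ORGANIC, {name: CHANNELS[name]}) - everything_off


print("portfolio:", round(portfolio_lift, 3))
print("silo:", {name: round(silo_lift(name), 3) for name in CHANNELS})
print("solo:", {name: round(solo_lift(name), 3) for name in CHANNELS})
```

Under these assumptions the silo lifts sum to less than the portfolio lift, and the solo lifts sum to more, because some users would be converted by either channel. None of the three is wrong; each answers a different question.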

Wrapping up

In my last post I stressed that understanding incrementality is important to optimizing marketing, and that randomized methods are more reliable than observational deductions. Now I hope I’ve given a glimpse into how complicated marketing measurement can be even if we can run accurate experiments.

[1] Hui, D. (2022, July 27). Nuances of Marketing at Scale. Retrieved from https://donaldhui.substack.com/p/nuances-of-marketing-at-scale.

[2] Chan, D., & Perry, M. (2017). Challenges and Opportunities in Media Mix Modeling.

[3] Barajas, J., Zidar, T., & Bay, M. (2020). Advertising Incrementality Measurement using Controlled Geo-Experiments: The Universal App Campaign Case Study.

[4] Kaushik, A. (2021, January). Your measurement resolution for 2021: Get a grip on incrementality. Retrieved from https://www.thinkwithgoogle.com/intl/en-ca/marketing-strategies/data-and-measurement/marketing-incrementality/.