Balanced samples are good, balancing samples is bad

We should lower our expectations from balancing. Sampling might not improve class separation, and even if it does, it will typically come at a cost to calibration and log loss.

We often have imbalanced datasets, where one class is far more common than another. In a binary classification problem, for example, the positives or the negatives may heavily outnumber the other class rather than splitting 50/50. We often have to predict outcomes that occur only 10% of the time, 1% of the time, or even more rarely. It makes sense that this happens, since for most types of data there is no particular reason to expect natural balance between groups.

I’ve talked with a lot of people who want to create a 50/50 balance before modeling by oversampling (randomly or synthetically) the minority class, undersampling the majority class, or both. They shouldn’t jump so quickly to do so, and they often want to for the wrong reasons.

Balanced datasets are good

To the extent that class is a fundamental distinction in our dataset and each class has diminishing returns to additional observations, balanced datasets give us the most information for any fixed sample size. Let’s say we want to classify phishing in emails, but we only have 1,000 emails, and even worse, only 10 of them are known phishing emails. We will have a serious modeling problem. There are only 10 phishing emails to generalize from: our entire knowledge of what phishing looks like can only come from those 10 emails, so we are limited by randomness and the curse of dimensionality. Which attributes of those emails will apply to all phishing emails, and which are random quirks of that corpus?

I would gladly trade one non-phishing email for one more phishing email. That would give me 10% more examples to learn about phishing (11 instead of 10), at a cost of just over 0.1% (1 of 990) of my non-phishing emails. Great trade. I would make that trade again, and I would happily keep trading until I had closer to 500 phishing emails and 500 safe emails.1
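The arithmetic of that trade can be sketched in a few lines. This is only an illustration of the counts in the example above; the helper name `trade_effect` is my own, and it measures relative class sizes, not the actual information gained.

```python
def trade_effect(n_minority, n_majority):
    """Relative change in each class when one majority row is swapped for one minority row."""
    gain = 1 / n_minority   # relative growth of the minority class
    loss = 1 / n_majority   # relative shrinkage of the majority class
    return gain, loss

# Start from the example corpus (1,000 emails) and look at the trade at
# several points on the way to a 50/50 split.
for n_phishing in (10, 100, 500):
    n_safe = 1_000 - n_phishing
    gain, loss = trade_effect(n_phishing, n_safe)
    print(f"{n_phishing:>3} phishing / {n_safe} safe: +{gain:.2%} phishing, -{loss:.2%} safe")
```

The trade is lopsided at 10 versus 990 and becomes even as the split approaches 500 versus 500, which is the sense in which I would stop trading near balance.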

If I were told how many observations I could have and could choose the composition without any prior knowledge of the domain, I would typically be happy with a 50/50 split.

Balancing samples is bad

Techniques for balancing datasets

The standard toolset for balancing includes random oversampling, random undersampling, and SMOTE. Oversampling duplicates some of the observations from the minority class. Undersampling discards some of the observations from the majority class. Aside from any prediction benefits, undersampling has the additional benefit (in contrast with oversampling methods) of making model fitting faster.

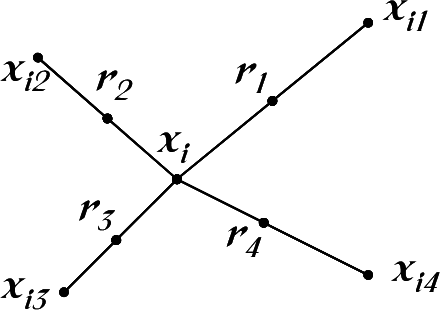
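Random oversampling and undersampling as described above can be sketched with the standard library alone. The function names and the toy "emails" are hypothetical, and the sketch assumes the minority class is the smaller list; real projects would more likely use a library implementation.

```python
import random

def random_oversample(minority, majority, rng):
    """Duplicate randomly chosen minority rows until both classes are equal in size."""
    extra = [rng.choice(minority) for _ in range(len(majority) - len(minority))]
    return minority + extra, majority

def random_undersample(minority, majority, rng):
    """Keep a random subset of majority rows the same size as the minority class."""
    return minority, rng.sample(majority, len(minority))

rng = random.Random(0)
minority = [f"phish_{i}" for i in range(10)]   # toy stand-ins for emails
majority = [f"safe_{i}" for i in range(990)]

over_min, over_maj = random_oversample(minority, majority, rng)
under_min, under_maj = random_undersample(minority, majority, rng)

print(len(over_min), len(over_maj))     # both classes now have 990 rows
print(len(set(over_min)))               # still only 10 distinct phishing emails
print(len(under_min), len(under_maj))   # both classes now have 10 rows
```

The oversampled set is balanced by count, but it still contains only 10 distinct phishing emails, and the undersampled set has thrown away 980 safe ones.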

A big limitation of simple random oversampling is that we only end up with the same data points repeated multiple times. The model can be increasingly specific and confident in predicting those exact points rather than learning general relationships. From the original SMOTE paper, in the context of decision trees but applicable to other algorithms too, “if we replicate the minority class, the decision region for the minority class becomes very specific […] in essence, overfitting” [1]. SMOTE solves this with a straightforward technique: it generates new fake (“synthetic”) data that sits in between real points from the minority class.

As the authors elaborate, “the synthetic examples cause the classifier to create larger and less specific decision regions”.
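The interpolation idea can be sketched as follows. This is a simplified, SMOTE-like illustration under my own assumptions (brute-force nearest neighbours, numeric features only, a hypothetical function name `smote_like`), not the reference implementation from the paper.

```python
import math
import random

def smote_like(minority, n_new, k, rng):
    """Create n_new synthetic points, each on the segment between a minority
    point and one of its k nearest minority neighbours."""
    synthetic = []
    for _ in range(n_new):
        i = rng.randrange(len(minority))
        base = minority[i]
        # k nearest neighbours of `base` within the minority class, excluding itself.
        others = [p for j, p in enumerate(minority) if j != i]
        neighbours = sorted(others, key=lambda p: math.dist(base, p))[:k]
        neighbour = rng.choice(neighbours)
        gap = rng.random()  # position along the segment, in [0, 1)
        synthetic.append(tuple(b + gap * (n - b) for b, n in zip(base, neighbour)))
    return synthetic

rng = random.Random(0)
minority = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]  # toy minority points
new_points = smote_like(minority, n_new=5, k=2, rng=rng)
for point in new_points:
    print(point)
```

Every synthetic point lies between two real minority points, so none of them repeats an existing observation exactly, which is what produces the larger decision regions.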

SMOTE and its descendants have been popular. The method is simple (although in the descendants, less so) and causes large shifts in model predictions, sometimes for the better.

But at what cost?

Balancing does not fix information problems

As emphasized earlier, naturally balanced datasets are ideal because we have more information to work with. With the example of only 10 phishing emails in a corpus, no sampling technique will save us. We can create synthetic permutations of the 10 phishing emails, and our ML algorithm will certainly do something given this data, but this won’t magically solve the underlying problem. Those data points can inform our judgment about phishing, but any action we take on them will rely on a large assumption about the generalization of those 10 data points. The general solution isn’t easy: collect more observations. Or find another dataset or an existing model to adapt.

Might not lead to better separation

One common motivation for upsampling is uniform classification, where the model assigns every observation to the same class. Suppose we build our phishing classification model and it classifies each email as non-phishing. It has an accuracy of 99%, predicting correctly on the 99% of emails that are non-phishing and incorrectly on the 1% of emails that are phishing. This isn’t a useful classification. But it may still be a useful model.

Most classification algorithms create underlying probabilities. Classification may happen in a final layer or threshold rule that assigns the most likely class. Yet we can still take action even when the probability is less than 50%. We might want to take action to prevent phishing even if there is only a minority chance the email is an actual phishing attempt. Choosing the most likely class is guaranteed to be the right option only when the costs of errors are symmetric. For many use cases we care more about class separation than about the accuracy of the most likely classification.
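One way to make the cost argument concrete is to compare the expected cost of acting with the expected cost of not acting. The costs below are hypothetical numbers chosen for illustration, and the sketch assumes the predicted probability is calibrated.

```python
def action_threshold(cost_false_positive, cost_false_negative):
    """Probability above which acting has lower expected cost than not acting."""
    return cost_false_positive / (cost_false_positive + cost_false_negative)

def should_act(p, cost_false_positive, cost_false_negative):
    """Act when the expected cost of a miss exceeds the expected cost of a false alarm."""
    return p * cost_false_negative > (1 - p) * cost_false_positive

# Hypothetical costs: a missed phishing email is 20 times worse than a false alarm.
cost_fp, cost_fn = 1.0, 20.0

print(action_threshold(1.0, 1.0))          # symmetric costs give the familiar 0.5
print(action_threshold(cost_fp, cost_fn))  # asymmetric costs push the threshold well below 0.5
print(should_act(0.10, cost_fp, cost_fn))  # a 10% chance of phishing already justifies action
```

Nothing about the model changes here. Only the decision rule applied to its probabilities does.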

“To SMOTE, or not to SMOTE?” is a nice study. The authors ask whether SMOTE and other oversampling methods actually improve separation when we account for the ability to choose a threshold. Using 73 datasets, seven algorithms (LGBM, XGBoost, CatBoost, SVM, MLP, decision trees, and AdaBoost), and F1 and AUC as metrics, they find that “while oversampling the data is beneficial when using a fixed threshold, it does not improve prediction quality when the threshold is optimized” [3]. Their very best model didn’t involve any balancing.
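To show what optimizing the threshold means in practice, here is a minimal sketch that sweeps candidate thresholds and keeps the one with the best F1. The scores and labels are made up for illustration, and this is not the paper's code. In real use the threshold should be chosen on validation data, not on the data used for the final evaluation.

```python
def f1_at_threshold(scores, labels, threshold):
    """F1 score when every score at or above the threshold is predicted positive."""
    tp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 1)
    fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 0)
    fn = sum(1 for s, y in zip(scores, labels) if s < threshold and y == 1)
    if tp == 0:
        return 0.0
    return 2 * tp / (2 * tp + fp + fn)

def best_f1_threshold(scores, labels):
    """Try each distinct score as a threshold and return the one with the highest F1."""
    candidates = sorted(set(scores))
    return max(candidates, key=lambda t: f1_at_threshold(scores, labels, t))

# Made-up predicted probabilities from a model trained on imbalanced data:
# no score reaches 0.5, but the positives still rank near the top.
scores = [0.02, 0.03, 0.05, 0.08, 0.10, 0.15, 0.30, 0.40]
labels = [0, 0, 0, 0, 0, 1, 0, 1]

fixed = f1_at_threshold(scores, labels, 0.5)
best = best_f1_threshold(scores, labels)
print("F1 at fixed 0.5 threshold:", fixed)
print("best threshold:", best, "F1:", f1_at_threshold(scores, labels, best))
```

With the fixed 0.5 threshold this model looks useless, yet the same scores separate the classes reasonably well once the threshold is allowed to move.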

I’m a little more positive about their results. For some of the algorithms they tested, SMOTE did much better than a regular sample, on others the metrics were very similar across methods, and there weren’t examples where SMOTE did significantly worse.

One bigger takeaway is that algorithm choice made a larger difference than the sampling method. Here’s Figure 1b from the paper, where the purple circles are AUC results on validation samples.

As the paper states, “the strong classifiers (without balancing) yield better prediction quality than the weak classifiers with balancing”. Citing other research, they emphasize other causes of poor performance that are prevalent in imbalanced data but not strictly about balance, including “small sample size, class separability issues, noisy data, data drift and more”. The paper is also, coincidentally, a nice demonstration of the robustness of boosted tree methods across many datasets and the high variance of untailored MLPs.

Many of the studies in which balancing improves performance use models that are very weak, even by the standards of the time. For example, consider López et al. (2013), in which oversampling methods show performance improvements over single decision trees, SVMs, and kNN models [4]. Even more so than SMOTE, some of its more complex descendants may add value through more total learning, compensating for weak subsequent learners. SMOTE is old (a compliment: it has staying power!) and many papers live in a world literally before XGBoost. Other papers are more recent and only live figuratively in a world without XGBoost.

Balancing destroys calibration

Usually our algorithms have good calibration properties. With artificial samples, our probabilities may be miscalibrated and lose their intrinsic meaning. This might be a problem if we don’t only need to classify, but also need accurate probabilities for some other purpose. Elor and Averbuch-Elor (2022) demonstrated that “in all cases, balancing the data results in better logloss for the minority samples, worse logloss for the majority samples and worse logloss overall” [3]. That log loss degradation was large and statistically significant. Sampling techniques that artificially change the input distribution also make it harder to interpret the model and to compare results across samples.
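A toy case shows the mechanism. Assume an intercept-only model, which under log loss simply predicts the positive rate of its training data: 1% when trained on the original sample, 50% when trained on a balanced one. This is my own simplification for illustration, not an experiment from the paper.

```python
import math

def log_loss(labels, p):
    """Mean negative log-likelihood of a constant predicted probability p."""
    return -sum(math.log(p) if y == 1 else math.log(1 - p) for y in labels) / len(labels)

# Evaluation data with the original class distribution: 1% positives.
labels = [1] * 10 + [0] * 990
positives = [y for y in labels if y == 1]
negatives = [y for y in labels if y == 0]

base_rate = sum(labels) / len(labels)  # prediction learned from the original sample
balanced_rate = 0.5                    # prediction learned from a 50/50 resample

for name, p in [("original", base_rate), ("balanced", balanced_rate)]:
    print(
        name,
        "minority:", round(log_loss(positives, p), 3),
        "majority:", round(log_loss(negatives, p), 3),
        "overall:", round(log_loss(labels, p), 3),
    )
```

The balanced version does better on the minority observations, worse on the majority observations, and worse overall, which is the same pattern as the quoted finding. Its predicted 50% also no longer means anything about how often phishing actually occurs.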

Sometimes I see claims that algorithms are biased in favour of the majority class, or they have a framing like "accuracy does not value rare cases as much as common cases" [6]. That’s not how I look at it. Algorithms are typically unbiased across observations. Each observation is valued exactly as much as every other observation. They treat each observation as equally important and they minimize aggregate loss. In cases of representative samples generated through a simple observational or random process, what else would we want algorithms to default to?

Instead it is often the modeler who wants to add bias, preferring to improve performance on some observations at the expense of others. This is often perfectly reasonable, when prediction may matter more on the minority class than the majority class. This is a loss function problem.2 We can use cost-aware loss functions, including the special case of class-balanced weights when we prefer equal performance across all classes regardless of their prevalence. We don't have to create artificial data or overrepresent specific data points.
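A minimal sketch of what a cost-aware loss can look like, with class-balanced weights as the special case. The function names are hypothetical, and the balanced formula (n divided by the number of classes times the class count) is one common convention, used here as an assumption.

```python
import math

def balanced_class_weights(labels):
    """Weight each class by n / (n_classes * n_in_class), so that every class
    contributes the same total weight to the loss."""
    n = len(labels)
    classes = sorted(set(labels))
    return {c: n / (len(classes) * labels.count(c)) for c in classes}

def weighted_log_loss(labels, probs, class_weights):
    """Weighted mean of the per-observation log loss for binary labels."""
    total = 0.0
    weight_sum = 0.0
    for y, p in zip(labels, probs):
        w = class_weights[y]
        loss = -math.log(p) if y == 1 else -math.log(1 - p)
        total += w * loss
        weight_sum += w
    return total / weight_sum

labels = [1] * 10 + [0] * 990
probs = [0.05] * len(labels)   # made-up constant prediction, for illustration only

uniform = {0: 1.0, 1: 1.0}                   # every observation counts equally
balanced = balanced_class_weights(labels)    # both classes get equal total weight
custom = {0: 1.0, 1: 20.0}                   # hypothetical cost ratio chosen by the modeler

for name, weights in [("uniform", uniform), ("balanced", balanced), ("custom", custom)]:
    print(name, round(weighted_log_loss(labels, probs, weights), 3))
```

The data stays exactly as observed. Only the relative importance of errors changes, and that choice is explicit in one place instead of being hidden in a resampling step.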

Unsuitability for some datasets

One downside of SMOTE is that its synthetic data points are not real and might not even be possible minority class outcomes. SMOTE might construct many synthetic minority class observations through regions in feature space that truly belong with the majority class. The subsequent classifier doesn’t even know which data points are actual data versus plausible-but-fake data.

That caveat does not imply that using SMOTE is always a bad idea.

I appreciate how Weiss uses an inductive bias framing, and I would like to find a paper that extends this conceptual framework and discusses balance in terms of the No Free Lunch Theorem (NFL, the less famous one). The NFL shows that no modeling choice can be best for all possible inputs. Given that, we have to make choices about which types of relationships between inputs and predictions are possible and preferred in our models. SMOTE has a big inductive bias, meaning it makes a big bet, on relationships between inputs and labels being continuous in feature space. It bets heavily on the chance that (unobserved) data between same-class observations are much more likely to belong to that class than to others.

SMOTE often succeeds because most predictive problems really are fairly continuous rather than dramatically discontinuous, and SMOTE is just one of many algorithms that have been effective with a large bet on continuity. When SMOTE is followed by a weak subsequent classification algorithm, that continuity inductive bias can be very worthwhile. When the problem space differs, this inductive bias can be damaging.
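A contrived example of the bet going wrong: suppose the minority class sits in two separate clusters along a single feature, with the majority class in between. All values here are hypothetical. If the neighbour count is larger than a cluster, interpolation can pair points from opposite clusters.

```python
# Hypothetical one-dimensional feature values.
minority_low = [-2.0, -1.8, -1.6]
minority_high = [1.6, 1.8, 2.0]
majority = [-0.4, -0.2, 0.0, 0.2, 0.4]

def interpolate(a, b, gap):
    """Point at fraction `gap` of the way from a to b, as in SMOTE-style interpolation."""
    return a + gap * (b - a)

# With more neighbours than a cluster holds, a point in the low cluster can be
# paired with a point in the high cluster.
synthetic = interpolate(minority_low[-1], minority_high[0], 0.5)

in_majority_region = min(majority) <= synthetic <= max(majority)
print(synthetic, in_majority_region)
```

The synthetic "minority" point lands in the middle of the majority region, and the downstream classifier has no way of knowing it was never observed.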

The bottom line

Despite the title, I’m not always against balancing. Undersampling can be a practical choice when we have technological limitations on sample size for fitting models. Oversampling methods can lead to better model performance in many cases. Data augmentation methods more generally have rich active applications [5]. What I’m against is any knee-jerk assumption that imbalance means we need to rebalance.

We should lower our expectations from balancing. It usually won’t be the difference maker for model performance. There’s a reason we don’t typically see balancing in recent SOTA classification models. Choice of model, choice of hyperparameters, feature construction, and other decisions may all be much more consequential.

Even if sampling improves our class separation, it will typically come at a cost to calibration and log loss. It adds computational and interpretative complexity that may not be worthwhile, especially through subsequent model iterations.

Balance at your own risk, and check whether it helps.

[1] Chawla, N., Bowyer, K., Hall, L.O., & Kegelmeyer, W.P. (2002). SMOTE: Synthetic Minority Over-sampling Technique. J. Artif. Intell. Res., 16, 321-357.

[2] Fernández, A., García, S., Herrera, F., & Chawla, N. (2018). SMOTE for Learning from Imbalanced Data: Progress and Challenges, Marking the 15-year Anniversary. J. Artif. Intell. Res., 61, 863-905.

[3] Elor, Y., & Averbuch-Elor, H. (2022). To SMOTE, or not to SMOTE?

[4] López, V., Fernández, A., García, S., Palade, V., & Herrera, F. (2013). An insight into classification with imbalanced data: Empirical results and current trends on using data intrinsic characteristics. Inf. Sci., 250, 113-141.

[5] Weng, Lilian. (Apr 2022). Learning with not enough data part 3: data generation. Lil’Log. https://lilianweng.github.io/posts/2022-04-15-data-gen/.

[6] Weiss, G.M. (2004). Mining with rarity: a unifying framework. SIGKDD Explor., 6, 7-19.

[7] Weiss, G.M., & Provost, F. (2003). Learning when training data are costly: the effect of class distribution on tree induction. J. Artif. Intell. Res., 19, 315-354.

1. Observation value is not quite as simple as that. It depends on the feature diversity within the samples, which could vary between classes. Weiss & Provost tested this on a sample of datasets [7]. Judging by AUC (Table 6), best-performing imbalance ratios varied by dataset, often but not exclusively being fairly balanced.

2. Note that this permits the possibility of the majority class mattering more than the minority class, in which case the matching resampling approach would be to imbalance the dataset further. That example should make it clear why balance isn’t intrinsically the ideal response to differences in loss costs.