Claude Code for data work

Claude Code is a coding tool, but it’s also an everything tool

We’re only as good as our tools, and it’s time to upgrade those. If you haven’t done so yet, learning Claude Code (or a competitor like Codex) is worth your time. These tools have improved immensely in the last few months, and for many professions the way we used to work will be unrecognizable to the new generation in our fields.

Let’s walk through the first three data projects that I used Claude Code for, and some tips & tricks I’ve learned through those. The projects cover a good range: a Python-based rating algorithm, a qualitative research report, and a SQL-based analysis pipeline. That variety is intentional; I wanted to test CC on some very different tasks, and stretch my own agentic engineering skills through different problems.

I’m not going to give a full explainer of what Claude Code is, or cover all of its functionality. There are other excellent resources for that, as well as vivid explainers for how Claude Code is changing workflows for other people. Instead I’ll give a condensed overview before explaining how I’ve used CC for some typical data projects, so you can better relate to how AI tools can be helpful.

In brief, Claude Code is an interface you run locally that connects to Claude AI models (Opus and Sonnet), with good built-in capabilities for actions like reading and updating files on your machine. You typically use it in a terminal, where it displays (with colour highlighting) chunks of code or text it will modify, and regularly prompts you for next steps or permissions. You could also run Claude Code inside your IDE, or you can use Claude Cowork as a non-terminal GUI for the same functionality.

Combining a broad set of terminal commands with access to strong LLMs makes for a very powerful and generalizable system. As I wrote before, agentic AI runs on tools, and Claude Code is a powerful demonstration of that principle. Anthropic’s competitors have followed suit with this form factor, so the alternatives now include OpenAI’s Codex, Google’s Gemini CLI, and the open-source OpenCode. I encourage you to give one of these a whirl if you haven’t already. Claude Code has a gentle learning curve: you can be effective almost immediately, and your skill with it grows quickly and organically. Just follow the installation instructions, pay for a Pro membership (or use API keys, or use the version your workplace provides), and run “claude” in a folder that has code or something else interesting in it.[1] Then start chatting with the LLM or give it a “/init”. Don’t feel pressured to learn all of the other functionality right away.

Project 1: Sudoku rating system

I run a small sudoku website, sudokudos.com. It’s not for playing Sudoku puzzles or participating in competitions, but rather it showcases historical results from the World Sudoku Championship and the Sudoku Grand Prix. It has some colourful charts instead of just the data tables that those competitions provide, and getting all the data together in one place made it possible to visualize progression for individual solvers (that’s the term we use) over time.

My original impetus for creating Sudokudos was to create a rating system for Sudoku solvers. Think how chess has Elo (although I need a different approach for Sudoku). Sudoku’s official governing body, the World Puzzle Federation, had also started and then stopped publishing a rating system, providing the opportunity for me to create one. I had been partway through writing a rating algorithm for Sudokudos when life intervened and it joined my long list of dormant side projects.

Until recently, that is. Now if you go to Sudokudos you can see the Ratings page, functional as a beta. The remainder of my algorithm work, and the entirety of writing a new page for it, were all done by Claude Code. You can browse the code and Claude instructions here.

Grade on this project: A

Claude Code was an incredible help through all stages. Construct the different rating algorithms I wanted? No problem. Code up the evaluation framework I had in mind? Easy. Construct all the charts in the style of the rest of my site? Walk in the park. Not only did I have CC write all the code, I had it perform all the analysis that I wanted, with myself as a reviewer.

I have very little criticism of how Claude Code performed on this project. My biggest qualm is that it kept coming back to proposing the same alternative rating algorithms, despite me repeatedly declining those suggestions. Relatedly, at multiple points I could have ended up in a development pendulum, where Claude’s approach to solving problem X leads to a new problem Y that Claude Code wants to fix by going back to the approach that has problem X, ad infinitum. Other than these issues with long-term insight management, development went very smoothly.

I didn’t do any more coding by hand after bringing Claude Code into this project. The most important thing I contributed was judgement. What constitutes a good rating system? How do we evaluate them? When should we opt for complexity or simplicity?

I modified my development approach along the way. Some tactics that I ultimately found helpful:

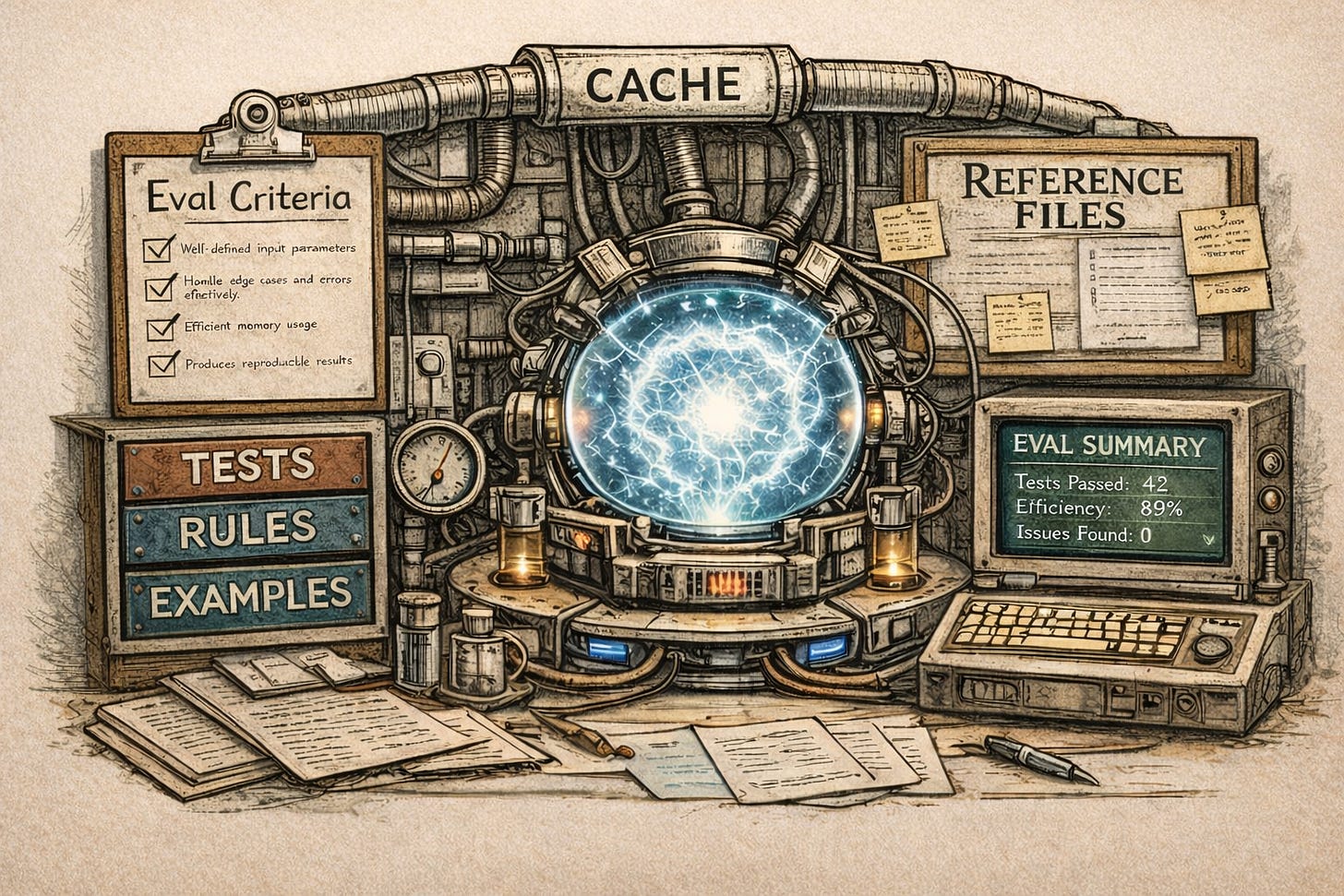

Invest time in encoding evaluation criteria, judgement, and examples into the CLAUDE.md file

Add caching so that slow data steps can be avoided when possible

Design a command line tool to summarize the findings. This is not only generally useful as a tool for myself, but it became very useful for CC to use during its evaluation

Create good unit tests and evaluation methods. Some of the data construction or algorithm fitting can be slow, and data analysis through LLM calls is even slower. We want to avoid hitting those steps if the code itself isn’t in a correct state.

Repeatedly pause development to have CC critically evaluate the code and refactor under my guidance
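As one way to picture the caching tactic from this list, here is a minimal sketch of a disk cache for a slow data step. The names `disk_cache`, `CACHE_DIR`, and `load_competition_results` are hypothetical and not taken from the Sudokudos code, and pickling results to a temp folder is an assumption; the actual project may cache quite differently.

```python
import functools
import hashlib
import pickle
import tempfile
from pathlib import Path

# Hypothetical cache location; a real project would more likely use a repo folder.
CACHE_DIR = Path(tempfile.gettempdir()) / "ratings_cache_sketch"


def disk_cache(func):
    """Store a function's result on disk, keyed by its name and arguments."""

    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        CACHE_DIR.mkdir(parents=True, exist_ok=True)
        key_source = repr((func.__name__, args, sorted(kwargs.items())))
        key = hashlib.sha256(key_source.encode("utf-8")).hexdigest()[:16]
        path = CACHE_DIR / f"{func.__name__}_{key}.pkl"
        if path.exists():
            # Cache hit: skip the slow step entirely.
            return pickle.loads(path.read_bytes())
        result = func(*args, **kwargs)
        path.write_bytes(pickle.dumps(result))
        return result

    return wrapper


CALLS = {"count": 0}  # Only here to show how often the slow body really runs.


@disk_cache
def load_competition_results(year):
    """Hypothetical stand-in for a slow step such as parsing raw results."""
    CALLS["count"] += 1
    return [{"year": year, "solver": "example", "score": 100}]
```

Calling `load_competition_results(2024)` twice runs the slow body only once. Deleting the cache folder forces a rebuild, which is the kind of thing a cache management command could take care of.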

Here’s a summary of what the command line tool currently offers:

    $ python main.py
    usage: main.py [-h] {leaderboard,solver,progression,compare,competitions,records,export,cache} ...

    Sudoku competition ratings CLI

    positional arguments:
      {leaderboard,solver,progression,compare,competitions,records,export,cache}
                            Commands
        leaderboard         Generate leaderboard
        solver              Show solver rating progression
        progression         Show leadership progression over time
        compare             Compare rating methods
        competitions        Show competition statistics
        records             Show career records
        export              Export rating data for sudokudos website
        cache               Show or manage data cache

For this project, it really helped that I had domain knowledge, that I had already built out a lot of code for CC to extrapolate from, and that I knew what I wanted.
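A subcommand layout like the one in the help output above can be built with `argparse` subparsers. The sketch below is an assumption about the structure, not the actual `main.py`: it wires up only two of the listed commands, and the `--top` option, the `name` argument, and the handler functions are all hypothetical.

```python
import argparse


def cmd_leaderboard(args):
    # Hypothetical handler; a real command would compute ratings first.
    return f"leaderboard (top {args.top})"


def cmd_solver(args):
    # Hypothetical handler for one solver's rating progression.
    return f"rating progression for {args.name}"


def build_parser():
    parser = argparse.ArgumentParser(
        prog="main.py", description="Sudoku competition ratings CLI"
    )
    subparsers = parser.add_subparsers(dest="command", help="Commands")

    leaderboard = subparsers.add_parser("leaderboard", help="Generate leaderboard")
    leaderboard.add_argument("--top", type=int, default=10)  # assumed option
    leaderboard.set_defaults(handler=cmd_leaderboard)

    solver = subparsers.add_parser("solver", help="Show solver rating progression")
    solver.add_argument("name")  # assumed positional argument
    solver.set_defaults(handler=cmd_solver)

    return parser


def main(argv=None):
    parser = build_parser()
    args = parser.parse_args(argv)
    if args.command is None:
        # No subcommand given: print the help text, as in the output above.
        parser.print_help()
        return None
    return args.handler(args)
```

For example, `main(["leaderboard", "--top", "5"])` dispatches to the leaderboard handler. Because every command is a plain function behind one entry point, an agent can call the same commands a human would when it needs to check its own results.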

I can very much relate to this quote from Ethan Mollick:

But I suspect the people who thrive will be the ones who know what good looks like — and can explain it clearly enough that even an AI can deliver it

Project 2: Research on Canada’s public daycare system

This is a little niche, unless you have an interest in economics or public policy. I was hooked by an excellent post by Noah Smith, “Let’s save the human species!” In it he wrestled with how the most direct ideas for solving low fertility and eventual population decline are all limited or partial.

The 5th idea he surveyed was “We can just pay people to have more kids”. It fails because, as he quoted from Lyman Stone, “$23,000 per year, or even $10,000 per year, is an incredibly large sum of money — much more than any current welfare proposals, and enough to require gargantuan tax hikes that would no doubt prove politically toxic”. One of my immediate reactions to that was: that does sound infeasible, but wait, isn’t Canada trying that now?[2]

Canada has a public daycare system, the Canada-Wide Early Learning and Child Care (CWELCC) program. Under this system a family using a participating daycare might pay around (CAD) $500 per month per child. If you don’t have kids that might sound like a lot, until you realize that private daycares cost on the order of $2,500 per month. Obviously there is a ton of variance by location and other caveats include selection bias and subsidy passthrough, but a bit of back-of-the-envelope math puts that subsidy on the order of ($2,500 - $500) * 12 = $24,000 per year per child.

How in the world can Canada afford anything on that order of magnitude?

I didn’t have the time to do a research project myself, and I figured this would be a nice test of Claude Code on a non-coding task. So I put it to work. I assigned it to search the web for data and sources, consolidate those, and build a repeatable playbook to generate a report. The report should start with basic facts and compound towards an analysis of the daycare program’s economics and future prospects.

Grade on this project: B-

I did learn a lot about the daycare program from this. I knew very little before: the government provides cheap daycare, there are long and uncertain wait times, and there is some uncertainty about the program’s future structure. Now I know details such as the program’s purpose (not explicitly about countering population decline), history (active since 2021), low availability (~32% of children aged 0-5), and that it is indeed a meaningful increase in government outlays (~1.7% of federal government spending).[3] I’ll spare you the rest, including how the research informed my own opinions, but if you’re curious you can read CC’s summary report here.

Unfortunately, CC wasn’t fully dependable on this project. While it has an inherent understanding of project structure for software projects, it had no idea how to document a research project. Its instinct was to immediately search the web, summarize its sources in one shot, and be done with it, much like a conversational LLM such as ChatGPT. I had to put some work into getting it to organize sources and decompose its work into (somewhat) repeatable steps. Even when I made progress on that, its folder structure repeatedly got out of hand with duplication or orphaned files.

Worse, the analysis was just not very deep. This is a tricky task where there actually isn’t a lot of published data or analysis on the program, so any new analysis needs to extrapolate a bit from the limited known data while being careful to not overstep with its claims. With primarily government sources to rely on, it should be sufficiently critical of the claims and framing. Instead, Claude Code sycophantically parroted the perspective from government announcements. It didn’t have any instinct on how to critically evaluate a government program. Even now after I tried to drill some skeptical thinking into it, you can see how the report is relatively superficial compared to what a good economist would create. That’s after multiple passes of giving feedback and instructing CC to make its guidelines more nuanced.

I now have some practices that I’ll consider for future research projects:

Give extensive permissions up front, to avoid repetitive pauses when asking for downloading permissions from each site

Add download caching

Design tiers of data analysis: raw data (cached), raw claims, and summarized documents

Create clear criteria for how to critically evaluate hypotheses

Maintain instructions for what the final reports should look like, for repeatability

Add validation tests
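To make the tiering and validation ideas concrete, here is a minimal sketch, assuming a layout where each extracted claim records the cached source file it came from. The `Claim` structure, its field names, and `validate_claims` are hypothetical; they show one way a validation test could catch unsourced claims and orphaned files, and they are not the structure of the actual daycare project.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """Middle tier: one raw claim extracted from a cached source document."""

    text: str
    source_file: str  # hypothetical field: name of a file in the raw-data tier


def validate_claims(claims, cached_sources):
    """Return a list of problems; an empty list means the tiers are consistent."""
    problems = []
    cited = set()
    for claim in claims:
        if not claim.text.strip():
            problems.append(f"empty claim citing {claim.source_file}")
        if claim.source_file not in cached_sources:
            problems.append(f"claim cites missing source: {claim.source_file}")
        cited.add(claim.source_file)
    # Cached files that no claim uses are candidates for cleanup.
    for orphan in sorted(set(cached_sources) - cited):
        problems.append(f"orphaned source file: {orphan}")
    return problems
```

A check like this is cheap to run after every change, so it can flag duplicated or orphaned files before the expensive summarization step is repeated.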

I decided to create a main.py which outputs instructions to paste into Claude Code (rather than only running code, since in this project Claude Code needs to use its LLM capabilities to repeat the analysis). I’m not sure if I’ll do that next time, but it’s helpful for me to have that as an entry point into using the project.
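One way such an entry point could look is sketched below. Everything in it is hypothetical (the step wording, the folder names, and the `build_instructions` helper); the idea is only that the script assembles a prompt for the agent rather than running the analysis itself.

```python
from pathlib import Path

# Hypothetical steps; a real playbook would live in the project's own instructions.
STEPS = [
    "Refresh cached sources listed in {sources_dir}/ and note any that fail to download.",
    "Re-extract raw claims from changed sources into {claims_dir}/.",
    "Regenerate the summary report following the report guidelines.",
    "Run the validation tests and list any failures before finishing.",
]


def build_instructions(project_root, sources_dir="sources", claims_dir="claims"):
    """Assemble a numbered prompt to paste into an agent session."""
    root = Path(project_root)
    lines = [f"Project folder: {root}", "Please carry out these steps in order:"]
    for number, step in enumerate(STEPS, start=1):
        text = step.format(sources_dir=sources_dir, claims_dir=claims_dir)
        lines.append(f"{number}. {text}")
    return "\n".join(lines)
```

Running `print(build_instructions("."))` produces the text to paste. Keeping the steps in one list means the playbook is versioned alongside the rest of the project.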

While this project had mixed results, the output is impressive compared to the limited amount of time I put into it. Even if this felt like an uphill battle, it was still a huge time saver. I expect that I can make Claude Code better at this and other similar tasks through better prompting and use of multiple agents. If I were an active social scientist with multiple projects I would invest more in finding a better workflow (consider, for example, Chris Blattman’s). I’m looking forward to the next time I have a qualitative research project where I can test out an evolved approach.

Project 3: Data analysis tool (for work)

I used to do a lot of data analysis as part of my work, but I have done less and less in the years since becoming a manager. Since I’m not a data analysis specialist at my company and only need to do it occasionally, alongside many other activities, I need tooling that can work around the knowledge gaps I have accumulated.

If I can make something that works for me, it could democratize data work more broadly while also sharply reducing the amount of mundane work that data scientists have to work through on their way to creative and insightful analysis. With the emergence of agentic AI tools I see the opportunity for a much higher volume of quality data analysis.

To be effective at data analysis, I need at least three prerequisites:

Business domain knowledge: How does the product work, what is its terminology, and what is the history of key business changes?

Data layout: What are the tables and their fields?

Tools for querying and visualization

That’s just a starting point. Highly effective data analysis also requires creativity, judgement, and ingenuity. Yet I had fallen behind on all three, and that had stopped me from doing any supplemental data analysis lately.[4]

I’m taking control back. I built a Claude Code project where I am embedding domain knowledge about the business and context on our tables. I had CC bootstrap this itself by having it scan our wiki; analyze our database hierarchy, schemas, metadata, and usage patterns; and ask me questions through the process.[5] I guided it through building Python code to visualize the key metrics I cared about, in ways that are tuned to our business patterns (e.g. the specific ways that seasonality affects us). I asked it to build reports about revenue and our conversion funnel, and I documented more domain knowledge whenever I found its emphasis misguided or found it missing context on key events.

Grade on this project: B+

CC was effective at interpreting our data architecture, querying with SQL, creating the charts that I wanted, and modifying those charts as I requested. It actually had a decent ability to critically evaluate the business, with much better judgement than for the daycare program analysis. It also had a better sense of an appropriate project structure, quickly deciding on folders for docs, scripts, views, procedures, queries, and outputs. BigQuery command line tools were essential, and Claude Code used them effectively.

One challenge I’m still exploring: as domain knowledge and instructions accumulate, how can I get Claude Code to consistently process the right markdown files and reliably follow instructions? I’m still unsure which content belongs in the main CLAUDE.md, subdirectory CLAUDE.md files, rules, skills, and other documentation files. Ultimately the key to this project is optimal context management to elicit ideal behavior from the underlying LLM.

I plan to make this tool much more powerful, through continued usage. Every time I learn a new nuance of our business or “gotcha” in our data, I can tell CC to update its own markdown accordingly. I envision a project where my colleagues and I can quickly address new data questions that we have, and where CC can be a thought partner for analyzing the business.

10 pointers for effective projects

These three projects have helped me see some patterns in analysis work using Claude Code, and helped me disabuse myself of some false patterns. One principle I’m sticking with: Invest in making the tool more effective at my problem. That can be through clear CLAUDE.md instructions, skills or rules files, unit tests, caching, writing command line programs for it to use, and so forth. Even more specifically, as Steve Newman put it, “the key is putting the agent in a position to check its own work”.

That’s a theme that connects most of my suggestions:

Create good unit tests and evaluation methods

Add caching so that slow steps can be avoided when possible

Design tiers of data analysis so that Claude Code doesn’t have to repeatedly, expensively, and unreliably one-shot the analysis after each change

Invest time in encoding evaluation criteria, judgement, and examples into the reference files

Manage context through multiple types of files (e.g. CLAUDE.md, rules, docs, etc.), when necessary

Maintain instructions for what the qualitative outputs should look like, for repeatability

Design a command line tool to summarize the findings, which may be useful for Claude Code to use for repeatable evaluation

Regularly pause development to have Claude Code critically evaluate the project and refactor

Give extensive permissions up front

Use Claude Code as a thought partner, both to clarify our own thinking and to reveal where its instructions are poorly specified

Where data projects often differ from pure coding projects is that a good output might be hard to specify and evaluate. We might need qualitative judgement to evaluate whether our project is successful. Because of that, it’s particularly important to give good instructions and to have concrete quality checks where possible.
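For a rating system, for example, some concrete checks can be written even when “good” is hard to define. The sketch below is illustrative only: `rate_solvers` is a deliberately simple stand-in (average finishing percentile), not the Sudokudos algorithm, and the three invariants are examples rather than a complete evaluation.

```python
import unittest


def rate_solvers(results):
    """Toy rating: mean percentile across competitions (1.0 = always first).

    `results` maps competition name -> list of solver names in finishing order.
    """
    totals, counts = {}, {}
    for ranking in results.values():
        n = len(ranking)
        for position, solver in enumerate(ranking):
            # First place scores 1.0, last place scores 0.0 (1.0 if alone).
            score = 1.0 if n == 1 else 1.0 - position / (n - 1)
            totals[solver] = totals.get(solver, 0.0) + score
            counts[solver] = counts.get(solver, 0) + 1
    return {solver: totals[solver] / counts[solver] for solver in totals}


class RatingInvariants(unittest.TestCase):
    # Made-up results with placeholder solver names.
    RESULTS = {
        "round_1": ["A", "B", "C"],
        "round_2": ["A", "C", "B"],
    }

    def test_consistent_winner_is_top_rated(self):
        ratings = rate_solvers(self.RESULTS)
        self.assertEqual(max(ratings, key=ratings.get), "A")

    def test_ratings_stay_in_range(self):
        for value in rate_solvers(self.RESULTS).values():
            self.assertTrue(0.0 <= value <= 1.0)

    def test_every_solver_gets_a_rating(self):
        self.assertEqual(set(rate_solvers(self.RESULTS)), {"A", "B", "C"})
```

Checks like these do not say whether a rating system is good, but they are fast, and they give the agent a way to notice when a change has broken something basic before any slower evaluation runs.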

I’m still so far from mastering the tools as they exist right now, let alone what they will evolve into. Yet already I’ve entirely changed how I work. There’s no going back.

[1] If you don’t want to commit to paying for Claude Code and you’re okay with a slightly less polished experience, there are some free models you can use in OpenCode.

[2] My other immediate reaction: Of course we have to pay people an immense amount of money if we actually want to affect their childbearing propensity. Children are incredibly expensive, plus people have all kinds of non-monetary reasons or situations that might hold them back from wanting and/or having kids. Anybody who thinks incentives could be cheap is deluding themselves.

[3] That’s a lower increase in budget than the Lyman Stone numbers that Noah Smith cites. Some leading factors for that could be that Canada’s program applies only to the early years, that it has low coverage even of those years, and that children make up a slightly lower percentage of the population in Canada than in the US.

[4] Specifically on why I fell behind: 1) I am a little fuzzy on the precise definitions of many of the more recent concepts that we’ve introduced into our business and of our terminology changes; 2) Our data layout has changed substantially, becoming more complex as we created new polished tables while still requiring many of our older ones too; and 3) We removed our primary tool for querying and visualization, replacing it with a more complex one that (furthermore) has a tiered system of artifacts and permissions.

[5] Huge kudos to my amazing colleagues in our Developer Experience team for integrating MCP servers and other techniques to extend our AI tooling. In this rapidly changing environment they’ve been able to deliver new capabilities even before people demand them.

The sudoku rating system is a great test case. Domain knowledge plus Claude Code is where the real leverage sits, and you've demonstrated that well with the evaluation framework approach.

Your point about the development pendulum rings true. I've hit that exact loop where the model keeps proposing the same fix you've already rejected. One thing that's helped me is swapping models mid-session. Different models have different blind spots, so when Claude gets stuck in a rut on something, switching to GPT-5.4 or Gemini sometimes breaks the cycle. Wrote up how to do that without leaving Claude Code here https://reading.sh/claude-code-how-to-run-any-model-gpt-5x-gemini-3-1-stealth-inside-it-e67e957e53c3

Have you tried any other models for the data work or have you stuck with Claude exclusively?